What Is Black Box AI? The Black Box Problem Explained

In April 2026, Vectara's hallucination leaderboard delivered an awkward verdict. Top language models now hallucinate less than 4% of the time on its core test. But the new "reasoning" variants of GPT-5, Claude Sonnet 4.5, Grok-4, and Gemini-3-Pro all hallucinate at over 10% on the new dataset. Grok-4-fast-reasoning hit 20.2%. The smartest models, the ones that "think" before answering, are the worst at telling you when they don't know.

That is the black box problem in one paragraph. We have built AI systems that often produce useful outputs and that no one, including their creators, can fully explain. They are precise without being calibrated, fluent without being honest, and confident without being correct.

Regulators noticed. The EU AI Act now levies fines of up to EUR 35 million or 7% of global turnover for prohibited uses, with high-risk system rules taking effect on 2 August 2026. The US Consumer Financial Protection Bureau told banks plainly that they cannot use complex algorithms if those algorithms stop them from explaining a credit denial. And the field of explainable AI, a niche topic five years ago, is now a market estimated between $9 billion and $13 billion in 2026.

This guide walks through what black box AI actually is, why even simple-looking AI models become black boxes, what goes wrong when they do, where they show up in crypto and fintech, how the explainable AI toolkit (SHAP, LIME, counterfactuals) tries to crack them open, and what you should know about the new regulatory regime in the EU and the US. There is also a short detour to clear up a recurring confusion: Blackbox AI, the coding assistant at blackbox.ai, is a different thing.

What Is Black Box AI and Why It Matters

Black box AI refers to any AI system whose inner reasoning is opaque to users and, often, to the developers who built it. Inputs and outputs are visible. The path between them is hidden inside layers of weights, learned patterns, and machine learning transformations that no human can fully read. The same applies whether the model handles tabular data, images, or natural language processing tasks like translation and chat.

The label is older than modern deep learning. Engineers have used "black box" since at least the 1960s for any system you can poke from the outside but not unscrew. The human brain itself, biologists like to point out, is also a black box, but the comparison only goes so far: an AI does not work like a human, and assuming it does is one of the fastest ways to misjudge what black box AI refers to. What changed in the last decade is the scale. A modern large language model can carry hundreds of billions of parameters. A typical deep neural network spreads "knowledge" across thousands of layers and millions of attention heads, with single neurons encoding multiple unrelated patterns at once. Researchers call this last property polysemanticity, and it is one of the reasons mechanistic interpretability is still in its early days.

Why should anyone outside research labs care? Because black box AI is now driving consequential decisions. It approves and denies credit. It scores defendants. It flags transactions as fraud. It runs a large share of the trading volume on every major crypto exchange. When it gets something wrong, opacity makes it almost impossible to find out why, fix it, or hold anyone accountable.

It also matters because AI governance no longer treats opacity as a developer's problem. The EU now treats it as a market-access question. US regulators treat it as a fair-lending question. Every executive who has signed off on an AI initiative since 2024 has run into the same wall: what is this thing actually doing, and why? Understanding black box AI is no longer optional, and you cannot solve the black box problem by simply using a different vendor.

Why AI Models Become Black Boxes

Not every AI is a black box. A simple decision tree is fully transparent. A linear regression model spits out coefficients you can read. Even a rule-based AI system from the 1990s is, in principle, auditable line by line.

So how do today's AI models become black boxes? It happens for four overlapping reasons.

First, scale. Deep learning models with millions or billions of parameters operate in high-dimensional spaces that humans cannot visualize. You can describe a 200-billion-parameter model in math, but no one can hold it in their head.

Second, distributed representations. In a deep neural network, no single neuron stores "the concept of cat" or "the rule for rejecting a loan." Concepts smear across thousands of neurons, and individual neurons participate in many concepts simultaneously. Pulling out a clean explanation is a research project, not a query.

Third, training-data dependence. The model's behavior is shaped by its training data, which is usually proprietary, gigantic, and sometimes legally fraught. Even when a developer publishes the model weights, the data is rarely shared. So a key part of the "why" is missing.

Fourth, intent. There are practical reasons to use black box approaches deliberately. Some AI developers and programmers obscure model internals on purpose to protect intellectual property, and other reasons to use black box designs include licensing terms and competitive moats. Even an open-weight model can effectively make a black box around its decisions, because most modern models rely on emergent patterns no documentation captures. A company that has invested $100 million in a model is not eager to publish its architecture and training procedure. Open-source AI models that share their underlying code are ultimately black boxes too, because users still cannot inspect the learned weights with any meaningful interpretation.

The result is that even seemingly simple advanced AI models, including LLMs and generative AI models, become black boxes by default. Transparent models are the exception, not the rule. Complex black boxes can deliver impressive accuracy, which is why teams keep deploying them despite the opacity. The same is true for black box AI models trained on rich, messy data: the lift over rule-based AI models is often big enough to overrule the explainability concerns until something breaks. Most modern black box AIs are ultimately black boxes because users still cannot inspect the learned weights. Open-weight models share their underlying code, and users can read the architecture, but the underlying code are ultimately black boxes when you ask "why did the model say that".

The Black Box Problem in Deep Learning

The black box problem is what you get when those four reasons compound. The model works, often impressively. But it works in a way that resists three things at once: explanation, validation, and correction.

Take the classic illustration: a deep learning model trained to identify pandas. It scores 99% on the test set. It looks great. Then someone runs an interpretability tool and discovers the model is not really looking at the panda. It is paying attention to the bamboo. Most photographs of pandas in the training data also contain bamboo. The model learned a shortcut. On a panda photograph without bamboo, the model fails.

This kind of "shortcut learning" is everywhere in deep learning. The model finds a statistical regularity that does not match the underlying concept, but you only notice when the world looks slightly different from the training set. The 2008 financial crisis is the historical analogy here. Value-at-Risk models built on Gaussian assumptions worked beautifully in normal markets and exploded in tail conditions, because they had learned shortcuts the modelers did not realize were shortcuts.

Today's deep learning models share that exact failure mode, with more parameters and more confidence. Mechanistic interpretability researchers, including teams at Anthropic and OpenAI, have started reverse-engineering small parts of language models neuron by neuron. Their work shows the inside of an LLM is closer to entangled circuits than tidy logic. There is no point at which you can put your finger and say "this is where the answer lives." The black box problem is not a bug to be fixed; it is structural.

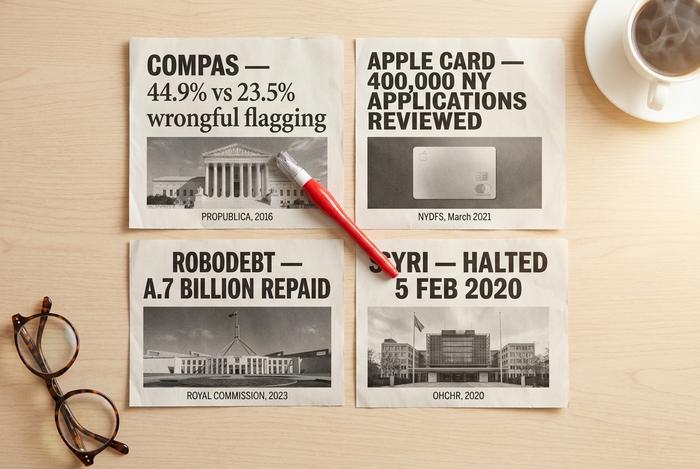

Black Box AI Examples: COMPAS, Apple Card, Robodebt, SyRI

Want to see what black box AI looks like when it falls over? The four cases everyone keeps citing tell you most of what you need to know. They span criminal justice, banking, and welfare. They all hurt real people. And no two failed quite the same way.

Start with COMPAS. Northpointe built it to predict whether a defendant would re-offend, and US courts rolled it out widely. Then ProPublica looked under the hood. Their 2016 audit ran 7,000-plus Broward County arrestees through the data and the result was ugly: Black defendants got wrongly flagged as high-risk in 44.9% of cases, while the figure for white defendants was just 23.5%. A 2024 follow-up paper made it worse. Two features (age and prior convictions) matched the accuracy of COMPAS's 137. So the complexity bought literally no extra signal, but it did make the bias much harder to spot. That is the canonical black box model that evaluates people instead of products. The box model that evaluates job applicants, the one Amazon scrapped in 2018, fits the same shape.

Then Apple Card, late 2019. Wozniak said his wife got a credit limit 10x lower than his. David Heinemeier Hansson said the same. The story went viral. The New York Department of Financial Services took it seriously: they pulled roughly 400,000 applications and looked. March 2021, they came back with a verdict of no statutory gender discrimination. But they also wrote, and this is the part that matters, that the customer-experience opacity was a trust problem in its own right. Black box harm, it turns out, is half about outcomes and half about perception. A press release does not fix the perception side.

Robodebt is the other side of the coin. No deep neural network involved. Australia ran an income-averaging rule against welfare records between 2016 and 2019, accused roughly 400,000 recipients of fraud, and could not coherently explain the calculation to anyone who got the letter. A Royal Commission later called the scheme unlawful. The government paid back A$1.7 billion plus another A$112 million in compensation. Lesson: a system does not need to be technically sophisticated to be a black box. It just needs to be unaccountable.

The Dutch toeslagenaffaire and SyRI are the European bookend. On 5 February 2020, a Dutch court ordered the immediate halt of SyRI, ruling that its opacity violated Article 8 of the European Convention on Human Rights. The related childcare-benefit scandal swept up over 20,000 parents who were wrongly accused of fraud. The Rutte government resigned over it in January 2021. That ruling is now the standard reference point in EU policy circles for why opaque AI in high-stakes settings is not a soft ethics issue, it is a legal one.

Four cases. Different sectors, different technologies, different countries. Same pattern: an opaque system, a consequential decision, and people on the receiving end with no real way to push back.

Black Box AI Risk in Real-World AI Systems

Once you start cataloguing black box AI risk in real-world AI systems, a pattern shows up. The same five risks appear again and again, regardless of whether the model is a credit scorer, a chatbot, or an algorithmic trading system.

| Risk | What it looks like | Why it scales |

|---|---|---|

| Hidden bias | Model treats protected groups differently | Training data carries historical patterns |

| Hallucination | Model invents facts or citations | LLMs optimize for fluency, not truth |

| Shortcut learning | Model relies on irrelevant correlations | Easier to learn than the real concept |

| Adversarial fragility | Small input change flips output | High-dimensional decision boundaries |

| Audit breakdown | Cannot reconstruct the why | No interpretable internal state |

These risks compound across black box AI systems used in finance, hiring, healthcare, and crypto. The complex deep learning processes inside them make it difficult to predict where the next failure will land, and traditional AI tools for QA were not built for models with hundreds of billions of parameters.

Hidden bias gets the headlines, but adversarial fragility and audit breakdown are the bigger long-term issues. A stable bias can at least be measured and corrected. A model that fails differently every time you run it (which ChatGPT does, on roughly 42% of smart-contract evaluation tasks per a 2024 ACM TOSEM study) is much harder to certify for regulated use.

The newest entrant to this list is what researchers call "agentic AI risk." When you wire an LLM into tools, give it memory, and let it call APIs, you compound the opacity. A single decision is now a chain of model invocations, retrieved documents, and tool calls, each of them partially opaque. Modern agents are black boxes inside black boxes.

Black Box AI in Crypto and Fintech

Of every industry that uses black box AI, crypto and fintech are where the deployment side and the risk side collide hardest. Stakes are large. Latency is short. Disclosure is thin. Regulation, especially in crypto, is still a patchwork. The result is an environment that rewards deploying first and writing the documentation afterward.

Algorithmic trading. Algorithmic trading drove an estimated 70-80% of crypto volume in 2025, higher than the 60-70% share in major equity markets. Wintermute alone moves more than $15 billion across 60-plus venues on an average day, with a record $2.24 billion single-day volume reported in 2025. The strategies behind those flows are deep-learning ensembles that no outside observer can audit. The Alameda/FTX collapse in November 2022 is the cleanest illustration of the risk: total crypto market capitalization fell from over $1 trillion to under $800 billion in roughly a month, and the underlying $14.6 billion FTT exposure on Alameda's balance sheet was invisible until the moment it wasn't.

AML and KYC scoring. The global anti-money-laundering software market reached $4.13 billion in 2025 and is forecast at $9.38 billion by 2030 (MarketsandMarkets). Crypto AML/KYC compliance specifically grows at a 13.8% CAGR. Vendors like ComplyAdvantage, Chainalysis Reactor, and Elliptic Navigator now use black box machine learning models for wallet-risk scoring. The use of black box machine learning here is widespread and effective enough to be deployed at most major exchanges, and opaque enough that compliance officers often cannot reconstruct why a specific wallet got blocked.

Smart-contract auditing. This is where AI's limits show up clearly. A 2024 arXiv study evaluated GPT-4 on smart-contract vulnerability detection. It hit 96.6% precision but only 37.8% recall, missing nearly two-thirds of real flaws. ChatGPT outputs are unstable across runs in 42% of contracts (ACM TOSEM 2024). Hybrid tools like GPTScan, which pair GPT with static analysis, exceed 90% precision and ~70% recall on token contracts (arXiv 2308.03314). CertiK Skynet now monitors 17,000-plus projects and roughly $494 billion in market value, but every responsible audit team still pairs the AI with a human reviewer.

Robo-advisors. Betterment manages more than $56 billion across 900,000-plus accounts. Wealthfront sits at $42.9 billion. The robo-advisor industry has crossed $1 trillion in global AUM. The portfolio rebalancing, tax-loss harvesting, and risk-scoring are all driven by ML models whose specific decisions are not disclosed in any retail-facing document.

Credit scoring and fraud detection. FICO is used by 90% of US lenders, and FICO Falcon processes over 65 billion transactions per year with reported 95%+ fraud-detection rates. A Bank of England study of 50 UK institutions in 2024 found ML credit-risk models reduced misclassifications by roughly 25% relative to logistic regression. The accuracy gain is real. The trade-off is that under CFPB Circulars 2022-03 and 2023-03, US lenders cannot use models opaque enough to prevent specific adverse-action explanations under ECOA.

The pattern across all five is the same. The model is more accurate than the transparent baseline. The opacity is structurally inseparable from the accuracy. And the regulators are catching up faster than the explainability tooling is.

Note on Blackbox AI: The Coding LLM

A quick disambiguation. When people search for "blackbox AI", they often mean the conceptual problem this article is about. They sometimes mean Blackbox AI, the company at blackbox.ai. Blackbox.ai is a coding LLM designed to transform the way developers write code. The product integrates with VS Code as a coding agent, suggests code, and competes with tools like Claude Code, GitHub Copilot, and Cursor. It is one of the better-known advanced AI technologies in the AI coding space, built on multiple AI models, and the code Blackbox suggests covers everything from refactors to test scaffolding. Blackbox integrates code generation, chat, and search into one workflow, and most users would call it the best AI assistant they have tried inside their editor.

The two meanings often get tangled in search results. The product Blackbox AI is not the subject of this article. We are looking at the structural property of opaque AI systems, not at any single coding assistant. If you searched for the product, the company maintains its own documentation and pricing. If you searched for the concept, keep reading.

Explainable AI and Explainability Tools

Explainable AI, usually shortened to XAI, is the field that tries to pry opaque AI models open without trashing their accuracy. It is also a real market now. Forecasts for 2026 land between $9 billion and $13 billion globally, with the gap depending on whose definition of XAI you accept. The goal is to make AI models more explainable without forcing teams back to slower or less accurate baselines. Smart teams run these tools on AI tools before releasing them, and they pair the output with documentation a human reviewer can actually read.

Three families of XAI technique are worth knowing.

The first is SHAP, short for SHapley Additive exPlanations. It is borrowed from cooperative game theory: for each prediction, SHAP assigns a contribution score to every input feature. Credit scoring teams love it. Fraud detection teams love it. Healthcare risk modelers tolerate it. SHAP is theoretically rigorous, but computationally it is a beast on big tabular data.

The second is LIME, Local Interpretable Model-agnostic Explanations. LIME builds a simple, interpretable surrogate model around a single prediction and uses that to explain the original. Faster than SHAP. Works on text, images, and tables. The catch is that LIME is local by design, so it can mislead you if you assume one explanation generalizes.

The third is counterfactual explanations. Instead of telling you why the model said yes, counterfactuals tell you the smallest input change that would have flipped the answer to no. That is exactly what a credit applicant or a flagged transaction wants to know: "What would I have to change?". Counterfactuals are gaining ground fast in adverse-action notices precisely because they map cleanly onto regulator expectations.

Beyond those three, you will see feature-importance plots, attention visualization for transformer layers, and Grad-CAM for image classifiers. Mechanistic interpretability, the practice of reverse-engineering specific neurons and attention circuits, sits at the bleeding edge of the field. Anthropic, OpenAI, and a handful of academic labs have published partial circuits, but the work has not yet translated into anything an enterprise compliance team can ship.

Be honest about where this all lands. Industry research published by Palo Alto Networks and others notes that XAI works well for image classifiers and structured tabular models, and only partly for LLMs. Logic inside a language model shifts as token position and context window change, so feature-attribution scores can mislead in ways the explanation itself does not warn you about. Explainability tools that share their underlying code are useful. They are not a finished solution to the black box problem.

Regulating Black Box AI: EU AI Act, NIST, CFPB

Most AI vendors did not expect regulators to move this fast. They did. The old "ship now, document later" posture is on its way out, and a small handful of rules are the reason.

Europe got there first with the EU AI Act. It is a phased rollout across 2025 to 2027, not a single switch. Prohibited practices became enforceable on 2 February 2025. The general-purpose AI rules turned on 2 August 2025. High-risk system obligations bite from 2 August 2026, and the regulated-products extension lands one year after that, on 2 August 2027. The fines are not for show. EUR 35 million or 7% of worldwide turnover for the most serious breaches, EUR 15 million or 3% for the rest (DLA Piper, 2025). And the list of high-risk use cases reads like a who's who of black box deployments: credit scoring, hiring, education, law enforcement, biometric ID. Every one of those now requires documentation, transparency, and human oversight by default.

The American picture is messier but moving in the same direction. The NIST AI Risk Management Framework is the closest thing to a US baseline. Released January 2023, expanded across 2024 and 2025, it has quietly become the document large enterprises map themselves to whether or not they technically must. December 2025 brought NIST IR 8596, the preliminary draft of the Cyber AI Profile, with a follow-up workshop on 14 January 2026. Plenty of teams are already adopting it.

The Consumer Financial Protection Bureau has been blunter. Circulars 2022-03 and 2023-03 say it directly: a creditor cannot use a complex algorithm if the complexity prevents the creditor from giving the specific reasons for an adverse action under ECOA and Regulation B. Read that carefully. It is not a ban on machine learning in lending. It is a ban on machine learning so opaque that you cannot tell a denied applicant what they did wrong. That is, in effect, a black box ban for consumer credit.

Banks face an older but still tougher requirement. Federal Reserve SR 11-7, on the books since 2011, forces banks to demonstrate they understand any model that drives a material decision. Modern deep-learning systems strain to clear that bar without help, and the OCC's Bulletin 2011-12 enforces the same approach.

Net result: any regulated entity in the US or EU has run out of excuses for treating opacity as an acceptable trade-off for accuracy. Either interpretability gets engineered in from the first design review, or you build a hybrid where a human carries the explanation the model cannot. There is no third path that survives an enforcement action.

How to Audit a Black Box AI System

So what does responsible deployment of a black box AI system actually look like in 2026? The practical playbook is shorter than vendors pretend.

You start with the data. Document where the training data came from, who labeled it, and which subgroups are represented. Roughly half of the bias problems you will hit later are already encoded here, and the half you cannot trace are the half you cannot fix.

Then you red-team the model. Probe it with adversarial inputs, prompt injections, edge cases, and out-of-distribution examples. Anthropic, OpenAI, and Microsoft now publish playbooks for this work that you can adapt without inventing new methodology.

Apply XAI on every model in production, not just the headline ones. SHAP for tabular pipelines. LIME for text and images. Counterfactuals for any decision that loops back to a user. None of those tools is perfect. Their absence, on the other hand, is a red flag for any auditor walking into your stack.

Watch for drift. Models go stale faster than most teams expect. Track input distributions, output distributions, and downstream outcomes. Set alerts on each, and treat unexplained shifts as incidents, not curiosities.

Build the escalation path before you need it. Every consequential model decision should have a human override and a documented appeal channel that a customer can actually use. If your support team is the appeal channel, write that down too.

Last, map yourself to the framework that applies. NIST AI RMF if you are in the US. EU AI Act high-risk requirements if you are in Europe. CFPB Circulars 2022-03 and 2023-03 if you touch consumer credit at all. Doing this once, early, is dramatically cheaper than retrofitting after an enforcement action lands on your desk.

You will not eliminate the black box. That is fine. The job is to make it observable, accountable, and bounded. That is the standard regulators are already enforcing, and it is what mature deployment looks like in 2026.