Digitally Anonymized in 2026: What It Means and How to Be Anonymous

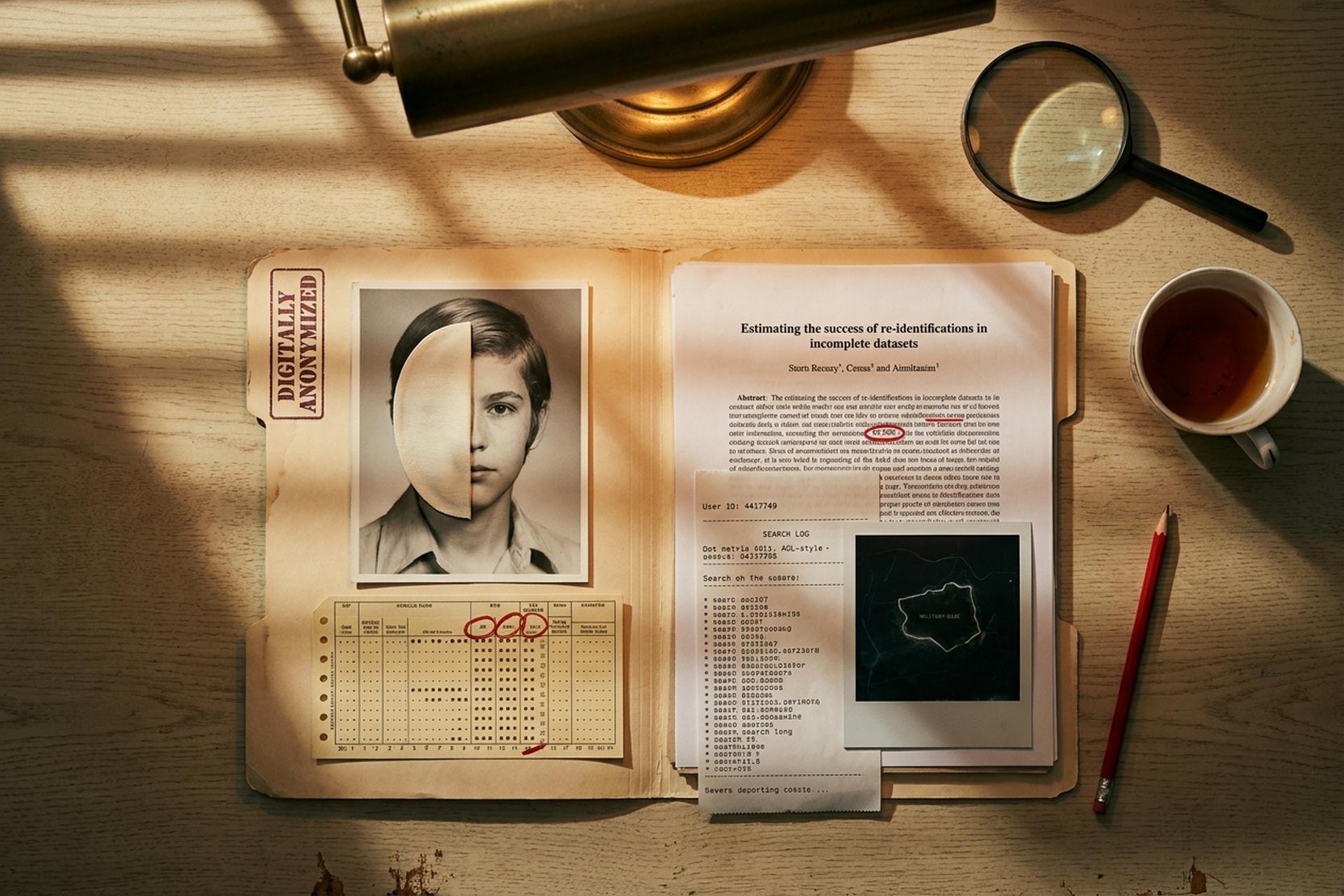

The phrase "digitally anonymized" (spelled "digitally anonymised" in UK-facing copy) is doing a lot of heavy lifting right now. Netflix used a close variant this year on the opening card of a true crime documentary that replaced witness faces and voices with AI characters. Academic researchers used the same phrase in 2019 for a dataset of 1.5 million Americans, then showed that 99.98% of them could be correctly re-identified from just 15 demographic attributes. Both claims are technically true. They also describe radically different things, almost opposite things depending on how you read them. So when somebody tells you a face, a record, or an entire dataset has been digitally anonymized, the only useful next question is what they actually mean, and against whom that anonymization is supposed to hold up.

What "digitally anonymized" actually means

Two distinct ideas hide behind the label. The first is surface de-identification: a blurred face, a fake name, a voice modulator, an AI avatar. It hides someone from a viewer who is not trying to dig further. The second is statistical anonymization: a record set altered so that even a skilled re-identifier with public side data cannot tie a row back to a person. The first is a data privacy gesture. The second is data privacy proper. GDPR's Recital 26 captures the difference plainly: data is only anonymous when no "means reasonably likely to be used" can re-identify it. HIPAA codes the same idea as either an 18-identifier Safe Harbor strip or an Expert Determination that the re-identification risk is "very small." The UK ICO's guidance, updated March 2025, frames it as the motivated-intruder test. Most things sold as "digitally anonymized" clear the first bar and fail the second.

How individuals get digitally anonymized in practice

Individual digital anonymity is not one switch. It is a stack. Each layer fixes one identifier and leaves the others alone. Most readers want three or four tools, not a single product labelled "anonymizer."

Network layer. Your IP address is the cheapest identifier to leak, and the easiest to hide. Tor remains the strongest network-level option, with roughly 2.5 million daily users and an infrastructure of around 8,000 volunteer relays as of mid-2025 per Tor Metrics. A commercial VPN is the lighter alternative; about 32% of US adults used one in 2025, down from 46% the year before per Security.org, and VPN apps count roughly 147 million users globally. Tor handles state-level threat models. A VPN handles your ISP, employer, and the coffee-shop Wi-Fi. The two solve different problems.

Browser layer. Pick a browser whose defaults assume the network is hostile: Brave, LibreWolf, Mullvad Browser, or Tor Browser for the strongest case. Fingerprint resistance and ad blocking matter more here than a private window, which only hides local history from someone sharing your laptop.
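The reason fingerprints matter more than private windows can be put in numbers. A value shared by a fraction p of browsers reveals -log2(p) bits about you, and roughly 33 bits is enough to single out one person among 8 billion. The sketch below is illustrative only: the attribute shares in `OBSERVED` are made-up placeholders, and adding the bits together assumes the attributes are independent, which overstates the total because real attributes are correlated.

```python
import math

# Hypothetical fractions of browsers sharing each observed value. These are
# illustrative placeholders, not measurements from any real fingerprinting study.
OBSERVED = {
    "user_agent": 0.002,  # one browser build on one OS version
    "screen": 0.05,       # one screen resolution
    "timezone": 0.03,
    "fonts": 0.001,       # one installed-font set
    "language": 0.2,
}


def surprisal_bits(share: float) -> float:
    """Bits of identifying information revealed by a value shared by `share` of users."""
    if not 0 < share <= 1:
        raise ValueError("share must be in (0, 1]")
    return -math.log2(share)


def fingerprint_bits(shares: dict[str, float]) -> float:
    """Total bits, assuming independent attributes (real ones are correlated)."""
    return sum(surprisal_bits(s) for s in shares.values())


def bits_to_single_out(population: int) -> float:
    """Bits needed to narrow a population of this size down to one person."""
    return math.log2(population)


if __name__ == "__main__":
    total = fingerprint_bits(OBSERVED)
    print(f"fingerprint carries about {total:.1f} bits")
    for pop in (1_000_000, 8_000_000_000):
        print(f"singling out 1 of {pop:,} needs {bits_to_single_out(pop):.1f} bits")
```

Even with invented numbers the shape holds: a handful of ordinary attributes gets most of the way to a global identifier and well past what is needed to pick one visitor out of a million. Fingerprint-resistant browsers work by making many users report the same values, which pushes each p back toward 1.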

Identity layer. Email is the single most useful identifier a tracker can collect, because it joins data brokers' profiles across services. The fix is alias-per-service via SimpleLogin (acquired by Proton in April 2022 with more than 100,000 users and 2 million aliases at that point) or addy.io. Add a per-service username and a virtual phone number for SMS verifications, and the easiest cross-site join goes dark.
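The join that aliases break is an ordinary equality join on the email column. A minimal sketch, assuming two hypothetical service tables keyed by email; `make_alias` and the `alias.example` domain are placeholders for illustration, not SimpleLogin's or addy.io's actual address format or API.

```python
import secrets


def make_alias(service: str, domain: str = "alias.example") -> str:
    """Return a random per-service forwarding address on a hypothetical alias domain."""
    return f"{service}.{secrets.token_hex(4)}@{domain}"


def join_on_email(left: list[dict], right: list[dict]) -> list[tuple[dict, dict]]:
    """Link rows from two services whose email fields are identical."""
    by_email = {row["email"]: row for row in left}
    return [(by_email[row["email"]], row) for row in right if row["email"] in by_email]


if __name__ == "__main__":
    real = "jane@mail.example"
    shop = [{"email": real, "bought": "knee brace"}]
    forum = [{"email": real, "posted": "recovering from surgery"}]
    print(len(join_on_email(shop, forum)))  # 1: one shared address links the profiles

    shop = [{"email": make_alias("shop"), "bought": "knee brace"}]
    forum = [{"email": make_alias("forum"), "posted": "recovering from surgery"}]
    print(len(join_on_email(shop, forum)))  # 0: the aliases never match
```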

Payment layer. Bitcoin is not a privacy tool any more. Chainalysis claims it can trace essentially all of the trading layer; the criminal share of on-chain volume has fallen from around 70% to roughly 20% precisely because investigators routinely deanonymize chains. Monero is the only major cryptocurrency Chainalysis publicly says it cannot trace at scale. The technical reason is the stack of CLSAG ring signatures (16-member rings: one real signer, 15 decoys), stealth addresses, and RingCT amount hiding. The price is liquidity. Binance globally delisted XMR in February 2024 and Kraken withdrew it from the European Economic Area by 31 December 2024, capping a 60-exchange delisting wave in 2024 and around 73 by mid-2025. Despite the squeeze, Monero held a market cap near $7.6 billion and a daily transaction count around 28,000 by late 2025, with the price near $411 in May 2026. Merchants who want to accept crypto without forcing buyers through KYC can use non-custodial gateways. Plisio, for example, supports 50-plus coins at a 0.5% fee, against the 2-3% merchant discount rate typical of card rails.
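To see why Bitcoin chains are traceable, consider the common-input-ownership heuristic: when a transaction spends several inputs, they are assumed to belong to one owner, a linkage the Bitcoin whitepaper itself flags in its privacy section. The sketch below clusters made-up addresses that way with a union-find structure. It is a toy, not Chainalysis's method; commercial tools layer many heuristics on top. Monero's ring signatures target exactly this step by making it ambiguous which ring member is actually being spent.

```python
class UnionFind:
    """Disjoint-set structure over address strings."""

    def __init__(self) -> None:
        self.parent: dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def cluster_by_common_inputs(transactions: list[list[str]]) -> list[set[str]]:
    """Assume all input addresses of one transaction share an owner, and merge them."""
    uf = UnionFind()
    for inputs in transactions:
        if not inputs:
            continue
        uf.find(inputs[0])  # register single-input transactions too
        for addr in inputs[1:]:
            uf.union(inputs[0], addr)
    clusters: dict[str, set[str]] = {}
    for addr in list(uf.parent):
        clusters.setdefault(uf.find(addr), set()).add(addr)
    return sorted(clusters.values(), key=sorted)


if __name__ == "__main__":
    # Made-up transactions, each listed only by its input addresses.
    txs = [["addr_A", "addr_B"], ["addr_B", "addr_C"], ["addr_D"], ["addr_E", "addr_F"]]
    for cluster in cluster_by_common_inputs(txs):
        print(sorted(cluster))
```

Two transactions that share `addr_B` are enough to merge three addresses into one presumed owner; on a real chain those merges compound across millions of transactions.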

Device and account hygiene. No logged-in accounts in the privacy session. Separate profiles for separate identities. The stack only works if you do not undo it by signing into the same Gmail across all of them.

| Layer | What it hides | Best-in-class tool | 2025-2026 number |
|---|---|---|---|
| Network | IP, route, ISP visibility | Tor / Mullvad VPN / Proton VPN | Tor ~2.5M daily users; ~147M global VPN app users |
| Browser | Fingerprint, trackers, telemetry | Brave / LibreWolf / Mullvad Browser | Brave 100M MAU (Sep 2025) |
| Identity | Email join, phone reuse | SimpleLogin / addy.io | SimpleLogin 100K+ users, 2M+ aliases (April 2022) |
| Payment | Spending fingerprint, KYC | Monero / Plisio non-custodial | Monero ~28K daily tx, $7.6B cap |
| Account | Cross-service linking | Per-service identities, no SSO | n/a |

Why "anonymized" datasets keep getting re-identified

The academic record is unflattering. Stripping names is almost never enough.

| Year | Dataset / event | Re-identification result |

|---|---|---|

| 1997 | Massachusetts GIC hospital release | Latanya Sweeney identifies Governor William Weld's record using public voter rolls |

| 2000 | 1990 US Census | Sweeney shows 87% of Americans are unique by {ZIP, DOB, sex} |

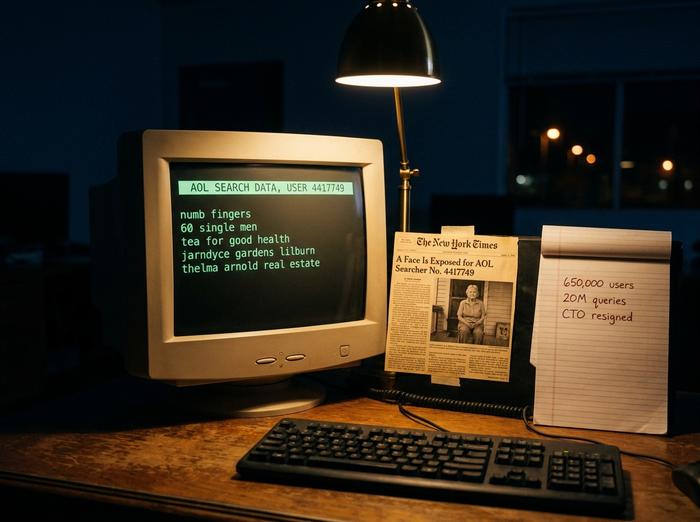

| 2006 | AOL search logs (20M queries / 650K users) | NYT identifies User 4417749 as Thelma Arnold within 5 days; CTO resigns |

| 2008 | Netflix Prize (480,189 subscribers) | Narayanan and Shmatikov: 99% of records identifiable with 8 ratings + 14-day dates |

| 2013 | 1.5M mobile phone subscribers | de Montjoye: 4 spatio-temporal points uniquely identify 95% of users |

| 2014 | NYC taxi dataset | MD5-hashed medallion numbers reversed in under 2 minutes; celebrity trips reconstructed |

| 2016 | Australian Medicare and PBS release | Re-identification of 3 sitting MPs and an AFL player within 5 weeks; dataset withdrawn |

| 2018 | Strava global heatmap | ~13 trillion GPS points exposed perimeters of military bases in Iraq, Syria, Afghanistan |

| 2019 | Rocher, Hendrickx, de Montjoye | 99.98% of Americans correctly re-identifiable from 15 demographic attributes |

| 2026 | Netflix "Investigation of Lucy Letby" | AI faces and voices applied to witnesses; visual anonymization only |
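The 2014 taxi row in the table is the easiest failure to reproduce: hashing is not anonymization when the input space is small enough to enumerate. A minimal sketch, assuming a single medallion format of digit, letter, digit, digit (the real dataset used several formats). It precomputes the MD5 of every possible value, after which reversing a published hash is a dictionary lookup.

```python
import hashlib
import string
from itertools import product


def md5_hex(value: str) -> str:
    return hashlib.md5(value.encode("ascii")).hexdigest()


def build_reverse_table() -> dict[str, str]:
    """Hash every value of one assumed medallion format: digit, letter, digit, digit."""
    table: dict[str, str] = {}
    for d1, letter, d2, d3 in product(string.digits, string.ascii_uppercase,
                                      string.digits, string.digits):
        medallion = f"{d1}{letter}{d2}{d3}"
        table[md5_hex(medallion)] = medallion
    return table


if __name__ == "__main__":
    table = build_reverse_table()  # 26,000 hashes: a trivial workload
    published = md5_hex("7B42")    # what a "hashed" release would show
    print(len(table), table[published])
```

Because anyone can recompute an unkeyed hash, every recipient of such a release effectively holds the key.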

The pattern repeats. A publisher removes the obvious identifiers, claims the dataset is anonymized, and a researcher with a public auxiliary source (voter rolls, IMDB, paparazzi photos, employer directories) joins the two back together, with real identities exposed within weeks.
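The join at the heart of that pattern fits in a few lines. This sketch uses invented records, with Sweeney's {ZIP, date of birth, sex} as the quasi-identifiers: a release with names removed is linked to a named auxiliary table, and any row that matches exactly one person is re-identified.

```python
from collections import defaultdict

QUASI_IDENTIFIERS = ("zip", "dob", "sex")

# Hypothetical "anonymized" release: names removed, quasi-identifiers kept.
RELEASED = [
    {"zip": "02138", "dob": "1950-01-02", "sex": "M", "diagnosis": "hypertension"},
    {"zip": "02139", "dob": "1962-03-14", "sex": "F", "diagnosis": "asthma"},
    {"zip": "02140", "dob": "1971-11-30", "sex": "F", "diagnosis": "migraine"},
]

# Hypothetical public auxiliary source, e.g. a voter roll, with names attached.
VOTER_ROLL = [
    {"name": "Voter A", "zip": "02138", "dob": "1950-01-02", "sex": "M"},
    {"name": "Voter B", "zip": "02138", "dob": "1950-01-02", "sex": "F"},
    {"name": "Voter C", "zip": "02139", "dob": "1962-03-14", "sex": "F"},
    {"name": "Voter D", "zip": "02140", "dob": "1971-11-30", "sex": "F"},
    {"name": "Voter E", "zip": "02140", "dob": "1971-11-30", "sex": "F"},
]


def qi_key(row: dict) -> tuple:
    return tuple(row[q] for q in QUASI_IDENTIFIERS)


def link(released: list[dict], auxiliary: list[dict]) -> dict[str, str]:
    """Attach a name to every released row whose quasi-identifiers match exactly one person."""
    names_by_key: dict[tuple, list[str]] = defaultdict(list)
    for person in auxiliary:
        names_by_key[qi_key(person)].append(person["name"])
    linked = {}
    for row in released:
        names = names_by_key.get(qi_key(row), [])
        if len(names) == 1:  # a unique match is a re-identification
            linked[names[0]] = row["diagnosis"]
    return linked


if __name__ == "__main__":
    print(link(RELEASED, VOTER_ROLL))
    # {'Voter A': 'hypertension', 'Voter C': 'asthma'}; the migraine row matches two people
```

What protected the third row was not the missing name but the fact that two people in the auxiliary table share its quasi-identifiers. That observation is the starting point for k-anonymity.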

The AOL case in August 2006 was one of the first widely reported real-world re-identifications, and search histories turned out to be quasi-identifiers in their own right. Thelma Arnold's queries about "numb fingers," "60 single men," and her hometown of Lilburn, Georgia were enough for two New York Times reporters to track her down at home. Three AOL employees, including the CTO, were out of a job within weeks.

The Netflix Prize, launched in October 2006, released about 100 million ratings from 480,189 subscribers across 17,770 films. Narayanan and Shmatikov published their de-anonymization paper at IEEE S&P 2008. With as few as two ratings and a three-day date window, they could uniquely identify 68% of subscribers. With eight ratings and a fourteen-day window, the figure rose to 99%. Netflix cancelled the planned sequel in 2010 after a Doe v. Netflix lawsuit and an FTC inquiry.
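The Netflix result rests on sparsity: few subscribers rated the same titles on the same days. A simplified sketch, assuming invented data and an attacker who knows a few (movie, approximate date) pairs, for example from dated public reviews. The paper's actual algorithm scores weighted, approximate matches and tolerates some wrong ratings; this version only keeps subscribers consistent with every observation and ignores the rating values.

```python
from datetime import date

# Hypothetical ratings data: subscriber -> {movie: date the rating was entered}.
DATASET = {
    "sub_1": {"Movie X": date(2005, 3, 1), "Movie Y": date(2005, 4, 10)},
    "sub_2": {"Movie X": date(2005, 3, 20), "Movie Y": date(2005, 4, 12)},
    "sub_3": {"Movie Y": date(2005, 4, 11)},
}


def consistent(record: dict, observations: list, window_days: int) -> bool:
    """True if every observed (movie, approximate date) appears within +/- window_days."""
    for movie, approx in observations:
        rated_on = record.get(movie)
        if rated_on is None or abs((rated_on - approx).days) > window_days:
            return False
    return True


def candidates(dataset: dict, observations: list, window_days: int) -> list[str]:
    return [sid for sid, record in dataset.items()
            if consistent(record, observations, window_days)]


if __name__ == "__main__":
    # Auxiliary knowledge, e.g. two dated reviews posted publicly elsewhere.
    seen = [("Movie X", date(2005, 3, 2)), ("Movie Y", date(2005, 4, 9))]
    print(candidates(DATASET, seen, window_days=3))   # ['sub_1']
    print(candidates(DATASET, seen, window_days=30))  # ['sub_1', 'sub_2']
```

Widening the date window lets more records through and adding observations shrinks the list again, which is the trade-off behind the 68% and 99% figures.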

The Lucy Letby documentary, released on Netflix in February 2026, is the consumer-facing version of the same lesson. The opening title card says: "Some contributors have been digitally disguised to maintain anonymity. Their names, appearances, and voices have been altered." The anonymizing technique here is generative AI rather than a blur or a silhouette, motivated in part by witnesses who needed to comply with court orders that limited their public visibility. Audience reaction split between an uncanny valley complaint about the use of AI and a defence that an AI avatar preserves human emotion better than a black box. Both miss the deeper point. The use of AI for visual anonymization does nothing about behavioral fingerprints in the testimony itself: phrasing, dates, named job roles. A motivated intruder, given anonymized data and a short candidate list, still has plenty to work with. AI has changed the look of the output. It has not changed the re-id math.

Differential privacy and the only honest anonymization

The framework that survives the de Montjoye attack class is differential privacy. Dwork, McSherry, Nissim, and Smith defined it in 2006 in their paper "Calibrating Noise to Sensitivity in Private Data Analysis." The idea is not to strip identifiers. It is to add carefully tuned noise to query results so any one person's presence or absence in the data is statistically deniable.
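Formally, a mechanism M is ε-differentially private if, for any two datasets D and D′ that differ in one person's record and any set of outputs S, Pr[M(D) ∈ S] ≤ e^ε · Pr[M(D′) ∈ S]. The paper's Laplace mechanism meets this by adding noise with scale Δ/ε, where Δ is the query's sensitivity. A minimal sketch for a counting query, where one person changes the answer by at most 1. It samples Laplace noise as the difference of two exponential draws; naive floating-point sampling like this is known to leak through low-order bits, so real deployments should use a hardened library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw from Laplace(0, scale): the difference of two Exp(1) draws, times scale."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Laplace mechanism for a counting query (sensitivity 1: one person changes it by 1)."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(2006)  # seeded only so the demo is repeatable
    for eps in (0.1, 1.0, 10.0):
        print(eps, round(private_count(1_000, eps, rng), 1))
```

Lower ε means larger typical noise: at ε = 0.1 the noise scale is 10, at ε = 10 it is 0.1.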

It comes with a quantitative privacy budget, epsilon (ε). Lower epsilon means more noise and stronger privacy. Differential privacy sits alongside a family of weaker, syntactic frameworks. k-anonymity, proposed by Sweeney in 2002, requires every record to be indistinguishable from at least k-1 others on the quasi-identifiers. l-diversity (Machanavajjhala et al. 2007) added the requirement that each such group contain at least l well-represented values of the sensitive attribute. t-closeness (Li et al. 2007) went further, requiring each group's distribution of the sensitive attribute to stay within a distance t of that attribute's distribution across the whole table. All three are heuristics. Only differential privacy gives a worst-case mathematical guarantee against arbitrary auxiliary data.
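The syntactic frameworks are easy to check mechanically, which is part of their appeal. A minimal sketch over an invented, already-generalized table: `k_anonymity` returns the smallest equivalence-class size, and `distinct_l_diversity` implements the simplest variant of l-diversity, a count of distinct sensitive values per class (the original paper also defines stricter entropy-based and recursive versions).

```python
from collections import defaultdict


def equivalence_classes(rows: list[dict], quasi_identifiers: tuple) -> dict:
    """Group rows that share the same values on every quasi-identifier."""
    classes = defaultdict(list)
    for row in rows:
        classes[tuple(row[q] for q in quasi_identifiers)].append(row)
    return classes


def k_anonymity(rows: list[dict], quasi_identifiers: tuple) -> int:
    """Largest k such that each row matches at least k-1 others on the quasi-identifiers."""
    return min(len(group) for group in equivalence_classes(rows, quasi_identifiers).values())


def distinct_l_diversity(rows: list[dict], quasi_identifiers: tuple, sensitive: str) -> int:
    """Fewest distinct sensitive values found in any equivalence class."""
    return min(len({row[sensitive] for row in group})
               for group in equivalence_classes(rows, quasi_identifiers).values())


# Hypothetical generalized release: 3-digit ZIP prefix and age band as quasi-identifiers.
ROWS = [
    {"zip": "021**", "age": "40-49", "diagnosis": "flu"},
    {"zip": "021**", "age": "40-49", "diagnosis": "flu"},
    {"zip": "021**", "age": "40-49", "diagnosis": "asthma"},
    {"zip": "022**", "age": "50-59", "diagnosis": "cancer"},
    {"zip": "022**", "age": "50-59", "diagnosis": "cancer"},
    {"zip": "022**", "age": "50-59", "diagnosis": "cancer"},
]

if __name__ == "__main__":
    qis = ("zip", "age")
    print(k_anonymity(ROWS, qis))                        # 3
    print(distinct_l_diversity(ROWS, qis, "diagnosis"))  # 1
```

The table is 3-anonymous, yet anyone known to be in their fifties in a 022 ZIP has their diagnosis revealed, because every record in that class says cancer. That homogeneity gap is what l-diversity was introduced to close.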

The deployment record is mixed. Apple announced local differential privacy at WWDC 2016, but reverse-engineering audits found its epsilon settings ranged from about 2 to 8, which privacy researchers consider weak. The US Census Bureau applied differential privacy to the 2020 release via its TopDown algorithm with a global ε around 19.61. That number drew its own criticism for being too loose, but the 2020 Census was the first national release to come with any formal privacy guarantee at all. If a "digitally anonymized" claim does not state an epsilon (or at least a k or a t), it is almost certainly the older 18-identifier-strip kind, not the formal kind.
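Why those values draw criticism follows from the definition: ε caps how much one person's data can multiply the probability of any output, by a factor of at most e^ε. Read naively as single worst-case bounds (both the Apple and Census budgets are composed across many queries, so neither maps exactly onto one bound), the figures work out to:

$$
\Pr[M(D) \in S] \le e^{\varepsilon} \, \Pr[M(D') \in S]
$$

$$
e^{2} \approx 7.39, \qquad e^{8} \approx 2.98 \times 10^{3}, \qquad e^{19.61} \approx 3.3 \times 10^{8}
$$

At ε = 1 the factor is about 2.7; at the high end of these ranges the bound on its own says little about any individual.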

Lucy Letby, AI avatars, and digital anonymization

The Lucy Letby documentary is the most-discussed example of "face digitally anonymized" in early 2026 for a reason. The documentary covers the British neonatal nurse convicted of seven murders, with growing questions about a possible miscarriage of justice. Netflix's choice to replace witnesses' faces and voices with AI-generated avatars carries weight beyond the case. Audience response was split. One camp called the avatars distracting, "cartoon-like," uncanny. The other defended the technique as preserving human emotion that a silhouette or voice-only treatment would have flattened.

What the debate has mostly missed is the threat model. An AI face is a UX overlay. It does not protect the source against a competent motivated intruder who already has a candidate list (other staff in the same unit at the same hospital across the same dates) and a transcript containing dates, professional roles, and turns of phrase. The Lucy Letby case, with a publicly named institution and a public timeline, has both. The narrower the source pool, the less an AI overlay buys you. That is not an argument against the technique. It is an argument for being clear about what it does and does not anonymize.
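The candidate-list argument can be made concrete. A minimal sketch on an entirely fictional eight-person roster that describes no real unit or person: each detail a viewer could glean from testimony (role, shift pattern, seniority) filters the pool, and the face never enters the calculation.

```python
# Entirely fictional roster for illustration; it describes no real unit or person.
ROSTER = [
    {"id": "staff_01", "role": "nurse", "shift": "night", "seniority": "junior"},
    {"id": "staff_02", "role": "nurse", "shift": "day", "seniority": "junior"},
    {"id": "staff_03", "role": "nurse", "shift": "night", "seniority": "senior"},
    {"id": "staff_04", "role": "doctor", "shift": "night", "seniority": "senior"},
    {"id": "staff_05", "role": "nurse", "shift": "night", "seniority": "junior"},
    {"id": "staff_06", "role": "doctor", "shift": "day", "seniority": "junior"},
    {"id": "staff_07", "role": "nurse", "shift": "day", "seniority": "senior"},
    {"id": "staff_08", "role": "nurse", "shift": "night", "seniority": "senior"},
]

# Clues a viewer might pick up from testimony, not from the (disguised) face.
CLUES = [("role", "nurse"), ("shift", "night"), ("seniority", "senior")]


def narrow(roster: list[dict], clues: list[tuple[str, str]]) -> tuple[list[str], list[int]]:
    """Apply each clue in turn; return the surviving ids and the pool size after each step."""
    remaining = list(roster)
    sizes = [len(remaining)]
    for field, value in clues:
        remaining = [person for person in remaining if person[field] == value]
        sizes.append(len(remaining))
    return [person["id"] for person in remaining], sizes


if __name__ == "__main__":
    ids, sizes = narrow(ROSTER, CLUES)
    print(sizes)  # [8, 6, 4, 2]
    print(ids)    # ['staff_03', 'staff_08']
```

Three ordinary details cut the pool from eight to two, and a disguised face changes none of those steps.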

What the law requires of "digitally anonymized" claims

Three regimes set the floor in most markets: the EU's GDPR, the US HIPAA rules for health data, and the UK ICO's 2025 guidance. GDPR Recital 26 sets the "means reasonably likely" test. HIPAA offers either a Safe Harbor strip of 18 specified identifiers or an Expert Determination opinion that residual re-identification risk is "very small." The ICO reaffirmed the motivated-intruder test in March 2025.
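To see what a Safe Harbor strip leaves behind, here is a minimal sketch of three of its rules: names removed, geography cut to a three-digit ZIP, and dates reduced to the year, with ages over 89 folded into a single 90+ category (and the birth year dropped for them). It ignores the other identifier categories. Which prefixes must be zeroed because their area covers 20,000 people or fewer depends on current Census data, so `restricted_zip3` is a caller-supplied parameter, empty by default, not the official list.

```python
from datetime import date


def safe_harbor_generalize(record: dict, restricted_zip3: frozenset = frozenset()) -> dict:
    """Keep only generalized fields, following a subset of the Safe Harbor rules."""
    zip3 = record["zip"][:3]
    over_89 = record["age"] > 89
    return {
        # 3-digit ZIP, zeroed where the prefix area has 20,000 people or fewer.
        "zip3": "000" if zip3 in restricted_zip3 else zip3,
        # Dates reduced to the year; for ages over 89 even the year is dropped.
        "birth_year": None if over_89 else record["dob"].year,
        "age": "90+" if over_89 else record["age"],
        "sex": record["sex"],
        "diagnosis": record["diagnosis"],
        # The name (and anything else not copied here) is dropped by omission.
    }


if __name__ == "__main__":
    patient = {"name": "Jane Doe", "zip": "02139", "dob": date(1980, 5, 17),
               "age": 45, "sex": "F", "diagnosis": "asthma"}
    print(safe_harbor_generalize(patient))
    # {'zip3': '021', 'birth_year': 1980, 'age': 45, 'sex': 'F', 'diagnosis': 'asthma'}
```

What survives (three-digit ZIP, birth year, sex, diagnosis) is the kind of residue the linkage attacks in the table exploit.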

The biggest legal shift in the past year came from the Court of Justice of the European Union. In Case C-413/23, EDPS v SRB, decided on 4 September 2025, the CJEU adopted a relative theory of personal data. The same record can be pseudonymous in one party's hands and anonymous in another's, based on what each side can reasonably know. That is a meaningful pivot. The pre-2025 default, pushed by de Montjoye and others, was that rich data is always personal data because re-id capacity has no real limit. The 2025 ruling says the call is contextual. Both views can coexist; the practical effect is more room for downstream parties to argue their copy of a dataset is anonymous even if the original publisher's copy was not.
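The relative view has a simple technical intuition behind it: a keyed pseudonym can be reversed by whoever holds the key and, in practice, by nobody else. A minimal sketch using HMAC-SHA256 from Python's standard library; it illustrates the idea only and is not a statement of what the CJEU held or of what makes data legally anonymous. The other fields in a record can still identify someone regardless of how the ID is handled.

```python
import hashlib
import hmac
import secrets


def pseudonymize(identifier: str, key: bytes) -> str:
    """Keyed pseudonym (HMAC-SHA256); without the key it cannot be recomputed."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


if __name__ == "__main__":
    key = secrets.token_bytes(32)  # held by the publisher only
    ids = ["7B42", "5X55"]
    released = [pseudonymize(i, key) for i in ids]
    # The publisher can rebuild the mapping, so in its hands the data stays pseudonymous.
    lookup = {pseudonymize(i, key): i for i in ids}
    print(lookup[released[0]])  # 7B42
    # A recipient without the key cannot build the brute-force table that breaks unkeyed MD5.
```

Contrast the unkeyed MD5 in the 2014 taxi case: anyone could recompute those hashes, so every recipient held the equivalent of the key.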

Checklist: is the data actually digitally anonymized?

Five questions to run before you take the label seriously:

1. Which identifiers were removed? Names alone are not enough. Coarse demographics (birth year, sex, a three-digit ZIP) and rare attributes survive even a full Safe Harbor strip and can still identify someone.

2. What auxiliary data is reasonably available? Voter rolls, IMDB, paparazzi photos, employer directories. Anything joinable counts.

3. Is there a formal guarantee? A k-anonymity parameter, a t-closeness number, or a differential-privacy epsilon. No number, no guarantee.

4. Who validated the claim: an internal team, or an external auditor testing against a defined motivated-intruder threat model?

5. What is the recourse if re-identification happens? A digitally anonymized dataset that turns out not to be is a breach, not a press release.

The honest reading of "digitally anonymized" in 2026 is that it covers two unrelated things at once. As a UX promise (we will not show your face) it is fine, occasionally elegant, sometimes badly executed. As a statistical claim (this dataset is anonymous) it is almost always insufficient without a formal guarantee. Build the individual stack with the assumption that the label is doing only half the work it implies. Demand the math when the label is on someone else's data.