Data Collection Methods: Primary, Secondary, and 2026 Tools

Data collection methods are in a strange place right now. The textbook side of the field (primary versus secondary, quantitative versus qualitative) looks roughly the same as it did twenty years ago. The implementation side has been rebuilt three times in the last five years. Apple's Intelligent Tracking Prevention broke a meaningful chunk of web analytics. Google's Privacy Sandbox was quietly retired in April 2025 once the Topics API only reached 13% of Chrome page loads, with third-party cookies staying on by default. AI scrapers chewed through the public web faster than publishers could throttle them. Anyone writing about this in 2026 has to choose between teaching the toolkit that exists and teaching the one that worked in 2019. This piece picks the first.

What data collection methods actually are

A data collection method is a procedure for gathering information aimed at a specific research question. Two axes organize the whole field. The first is primary versus secondary. Primary data is collected first-hand for your own question. Secondary data is data that already exists and you re-use. The second axis is quantitative versus qualitative. Quantitative data is countable and statistical: numbers, counts, ratings, timestamps. Qualitative data is interpretive: words, themes, observations, transcripts. Real research designs usually mix the two on purpose. A survey with a 1-5 rating plus a free-text "why" is the most common mixed-methods instrument in the wild.

Primary data collection methods used in 2026

Seven core types of data collection cover almost everything on the primary side. Each method has a strength, a cost profile, and a 2026 default tool. Sampling methods (random, stratified, convenience, cluster) sit underneath them as the design choice that decides whether the collected data generalizes beyond the people you asked.

| Method | Best for | Typical tool | 2026 anchor |
|---|---|---|---|
| Surveys / questionnaires | Scale, ratings, segmentation | Qualtrics, SurveyMonkey, Typeform | Online dominates; mobile-first |
| Interviews | Depth, motivation, edge cases | Zoom, Microsoft Teams + Otter.ai | Asynchronous tools rising |
| Focus groups | Group dynamics, concept testing | Recollective, Discuss.io | ~$5,000-$9,000 per session (Twilio) |
| Observation | Real behavior in context | Field notes, video, screen recording | Ethnography persists but is less common |
| Experiments | Causal inference | A/B testing platforms (Optimizely, GrowthBook) | Holdout discipline matters more |
| Documents / records | Existing organizational text | SharePoint, support transcripts | LLM-assisted analysis common |
| Mobile data collection | Field studies, low-connectivity work | SurveyCTO, KoboToolbox | Offline-first remains essential |

Surveys and questionnaires still do the heaviest lifting. They scale. They segment. They are the only practical way to ask 10,000 people the same question. The trick is question design, not the platform. A poorly worded questionnaire produces noise no respondent can rescue.
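Question design is one half of a survey; the sampling design underneath it is the other. As a rough illustration, here is a minimal sketch of stratified sampling. The sampling frame, the `device` field, and the 10% fraction are all hypothetical, chosen only to show how drawing the same fraction from every stratum keeps a small group from vanishing.

```python
import random
from collections import Counter, defaultdict

def stratified_sample(respondents, stratum_key, fraction, seed=0):
    """Draw the same fraction from every stratum (at least one person each)."""
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    strata = defaultdict(list)
    for person in respondents:
        strata[person[stratum_key]].append(person)

    sample = []
    for group in strata.values():
        k = max(1, round(len(group) * fraction))
        sample.extend(rng.sample(group, k))  # sampling without replacement
    return sample

# Hypothetical sampling frame: 900 mobile respondents, 100 desktop respondents.
frame = [{"id": i, "device": "mobile" if i < 900 else "desktop"} for i in range(1000)]
picked = stratified_sample(frame, "device", fraction=0.1)
by_device = Counter(p["device"] for p in picked)
print(by_device)
```

A simple random draw of 100 people from the same frame would usually land near the same split, but nothing guarantees it. Stratifying makes the split a design decision instead of a matter of luck.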

Interviews sit on the depth axis. Structured ones use a fixed script. Semi-structured ones use a script but allow follow-ups. Unstructured ones look like a guided conversation. Twenty hours of high-quality interviews can shape product strategy as well as a survey of 1,000. Very different evidence, same decision.

Focus groups remain useful for group-driven topics like packaging, brand reactions, and taboo subjects. Their use declined once remote video calls made one-to-one interviews cheap. Still, a skilled moderator running a focus group can surface contradictions a one-to-one interview misses. Twilio puts the typical cost at $5,000 to $9,000 per session, which is why market research budgets reserve them for high-stakes decisions.

Observation is what you do when self-reported behavior lies. Which is most of the time. Participant observation, the ethnographic tradition, is expensive and slow but the only way to capture what people actually do in context. Non-participant observation is cheaper and more limited.

Experiments are still the gold standard for causal claims. A/B tests on a web product. Controlled trials in a clinical setting. Quasi-experiments where random assignment is impossible. Two lapses in discipline break most business experiments: samples that are too small, and peeking at the metric before the test ends.
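The sample-size problem can be checked before a test starts. Below is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The baseline rate, the target rate, and the defaults of 5% significance and 80% power are illustrative assumptions, not figures from any platform.

```python
import math
from statistics import NormalDist

def sample_size_per_arm(p_base, p_variant, alpha=0.05, power=0.8):
    """Approximate users needed in each arm of a two-sided, two-proportion test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    p_bar = (p_base + p_variant) / 2
    spread_null = math.sqrt(2 * p_bar * (1 - p_bar))
    spread_alt = math.sqrt(p_base * (1 - p_base) + p_variant * (1 - p_variant))
    n = (z_alpha * spread_null + z_beta * spread_alt) ** 2 / (p_variant - p_base) ** 2
    return math.ceil(n)

# Hypothetical test: lift a 10% conversion rate to 12%.
needed = sample_size_per_arm(0.10, 0.12)
print(needed)
```

With these inputs the answer comes out at a few thousand users per arm, and halving the expected lift pushes it far higher. Fixing that number in advance is also the simplest guard against peeking: the test ends when the sample is reached, not when the dashboard looks good.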

Documents and records include internal logs, customer-service transcripts, support tickets, sales notes. Modern LLM workflows make analysing this kind of raw text far cheaper than five years ago. Customer-experience teams now treat ticket archives as a primary collection source again, after years of writing them off.

Mobile data collection matters in field research, NGO work, and emerging-market surveys where connectivity is patchy. SurveyCTO and KoboToolbox are the established platforms. Offline-first design is the non-negotiable feature.

Secondary data collection methods and sources

Secondary data is the other half of the field. Re-use, not first collection. Sources of secondary data range across open government datasets, statistical agencies, syndicated panels from Kantar and Nielsen, internal data lakes, point-of-sale archives, census data, and the open web. The boom area sits in web scraping. Bright Data and Apify run multi-billion-dollar businesses on legitimate uses: price intelligence, brand monitoring, academic research. And, increasingly, AI training corpora.

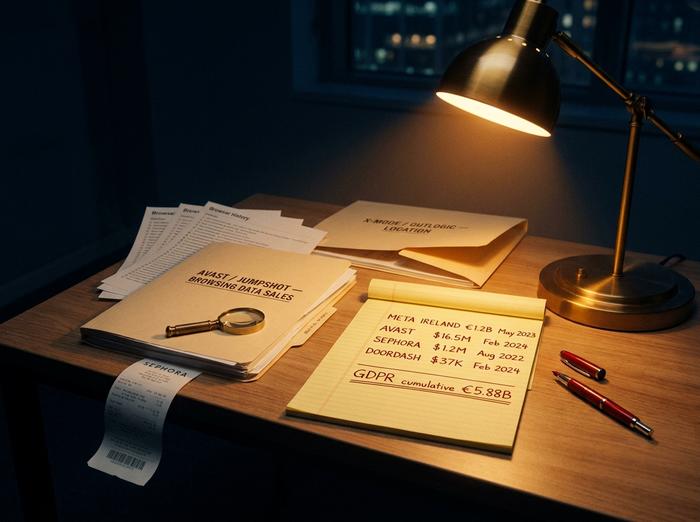

The legal floor moved most here, too. In February 2024 the FTC fined antivirus vendor Avast $16.5 million for harvesting browsing data through its security tools and reselling it via a subsidiary called Jumpshot. The same regulator ordered X-Mode and Outlogic in January 2024 to stop selling sensitive location data, a first-of-its-kind action. The Authors Guild and the New York Times both filed against OpenAI in 2023 over training-data use. Both cases remain active in 2026. Secondary collection used to feel free. It is not free anymore.

Quantitative vs qualitative data collection

The classic cut. Quantitative methods produce numbers you can run statistics on: surveys at scale, A/B tests, telemetry events, transactional logs. Statistical methods then turn that data into trends, correlations, and confidence intervals. Qualitative research methods produce text and meaning you have to interpret: interviews, open-ended survey responses, ethnographic field notes. The data collected from each side complements the other. Most useful research mixes the two. A Net Promoter Score gives a number that's easy to track. The free-text "why did you give that score" attached to it gives you the reason the number moved. Run either alone and you miss half the story.
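The Net Promoter Score example is easy to make concrete. This minimal sketch uses the standard NPS convention (0-10 scale, 9-10 counted as promoters, 0-6 as detractors). The responses are made up for illustration.

```python
def net_promoter_score(ratings):
    """NPS = % promoters (9-10) minus % detractors (0-6), on a 0-10 scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)

# Hypothetical mixed-methods responses: a score plus the free-text "why".
responses = [
    (10, "Setup took five minutes"),
    (9, "Support answered fast"),
    (8, "Fine, nothing special"),
    (7, "Does the job"),
    (6, "Pricing page is confusing"),
    (3, "Export kept failing"),
    (10, "Love the mobile app"),
    (9, "Reliable"),
    (5, "Too many emails"),
    (8, "Good value"),
]

score = net_promoter_score([rating for rating, _ in responses])
detractor_reasons = [why for rating, why in responses if rating <= 6]
print(score, detractor_reasons)
```

The score is the quantitative half. The list of detractor comments is the qualitative half, and it is the part that tells you what to fix.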

Two practical rules. If you can pre-write the answer categories and just need scale, quantitative wins. If you cannot yet describe what you are looking for — and that is more common than people admit — qualitative comes first. Then the quantitative work measures whatever the qualitative work surfaced.

How businesses collect data in 2026

The business stack is where data collection looks nothing like the textbook. Five layers cover most of what a modern company runs.

| Layer | Function | Typical vendor | 2025-2026 anchor |
|---|---|---|---|
| CRM | First-party customer records | Salesforce, HubSpot, MS Dynamics 365 | Salesforce ~21% of global CRM market |
| Web / app analytics | Behavioral telemetry | GA4, Plausible, Adobe Analytics | GA4 universal after UA sunset (Jul 2023) |
| Server-side tracking | First-party identifiers after ITP | Server-side GTM, RudderStack, Segment | Default infrastructure after Apple ITP |
| CDP | Unified customer profile | Twilio Segment, Tealium, mParticle | Market ~$2B (2024) → ~$7B by 2028 |
| IoT / telemetry | Device events | AWS IoT, Azure IoT Hub | ~18.8B connected devices (end-2024) |

CRM is where first-party customer data lives. Salesforce holds roughly a fifth of the global CRM market. HubSpot leads the SMB segment. Microsoft Dynamics 365 is strong inside enterprises already buying Microsoft 365. The CRM is also where regulated data tends to land first, which is why GDPR enforcement keeps showing up there.

Web and app analytics moved decisively to Google Analytics 4 after Universal Analytics was shut off in July 2023. Privacy-leaning teams use Plausible or Fathom, which collect less data and offer less reporting power in return. Adobe Analytics still owns the enterprise corner.

Server-side tracking is the most under-discussed shift of the past three years. Apple's ITP and browser-level fingerprint protection hit client-side cookies hard. So vendors moved the tracking layer behind their own domain, where Safari and Firefox cannot strip IDs as easily. Server-side Google Tag Manager and RudderStack are the default plumbing.
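The core idea is small enough to sketch: the site's own server, not a script in the page, issues and reads the visitor identifier. The example below uses only Python's standard library. The cookie name `fp_id` and the one-year lifetime are hypothetical, and this is not how server-side GTM or RudderStack are implemented internally. It also assumes consent has already been handled upstream.

```python
import uuid
from http.cookies import SimpleCookie

COOKIE_NAME = "fp_id"          # hypothetical first-party cookie name
ONE_YEAR = 365 * 24 * 60 * 60  # hypothetical lifetime, in seconds

def first_party_id(cookie_header):
    """Reuse the visitor ID from the request, or mint one, and build Set-Cookie."""
    incoming = SimpleCookie()
    incoming.load(cookie_header or "")
    if COOKIE_NAME in incoming:
        visitor_id = incoming[COOKIE_NAME].value
    else:
        visitor_id = uuid.uuid4().hex

    outgoing = SimpleCookie()
    outgoing[COOKIE_NAME] = visitor_id
    outgoing[COOKIE_NAME]["max-age"] = ONE_YEAR
    outgoing[COOKIE_NAME]["path"] = "/"
    outgoing[COOKIE_NAME]["httponly"] = True   # not readable by page scripts
    outgoing[COOKIE_NAME]["secure"] = True     # sent over HTTPS only
    outgoing[COOKIE_NAME]["samesite"] = "Lax"
    return visitor_id, outgoing[COOKIE_NAME].OutputString()

# A returning visitor keeps the same ID; the server just refreshes the cookie.
visitor_id, set_cookie_value = first_party_id("fp_id=abc123; theme=dark")
print(visitor_id, set_cookie_value)
```

The returned string is the value for a `Set-Cookie` response header. Because it is set over HTTP from the site's own domain and marked `HttpOnly`, it sits outside the client-side script path that the browsers clamp down on.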

Customer data platforms unify records from CRM, web, app, and email into one profile per customer. Statista pegs the CDP market at roughly $2 billion in 2024, headed for $7 billion by 2028. Twilio Segment, Tealium, and mParticle anchor the category.
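What "one profile per customer" means in practice can be sketched in a few lines. This is a deliberately naive illustration that assumes a normalized email address is the join key and that the first non-empty value for a field wins. Real CDPs use far more involved identity resolution, and every record and field name here is hypothetical.

```python
def unify_profiles(sources):
    """Merge records from several systems into one profile per normalized email."""
    profiles = {}
    for source_name, records in sources.items():
        for record in records:
            key = record["email"].strip().lower()  # naive identity key
            profile = profiles.setdefault(key, {"email": key, "sources": []})
            profile["sources"].append(source_name)
            for field, value in record.items():
                if field != "email" and value is not None:
                    profile.setdefault(field, value)  # first non-empty value wins
    return profiles

# Hypothetical records for the same person in three systems.
crm = [{"email": "Ana@Example.com", "name": "Ana", "plan": "pro"}]
web = [{"email": "ana@example.com ", "last_page": "/pricing"}]
email_tool = [{"email": "ana@example.com", "name": None, "opens": 3}]

profiles = unify_profiles({"crm": crm, "web": web, "email": email_tool})
print(profiles["ana@example.com"])
```

Keeping the `sources` list on each profile is a small design choice that pays off later: when a deletion or access request arrives, it shows which upstream systems hold data on that person.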

IoT and telemetry is the layer most articles skip and shouldn't. IoT Analytics counted about 18.8 billion connected IoT devices globally at the end of 2024. The number is projected toward 40 billion by 2030. Every one of them collects data on something: energy use, location, temperature, motion, occupancy. The EU Data Act, effective 12 September 2025, gives users portability rights over the data those devices generate.
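The market and device projections in this section imply annual growth rates that are worth working out before quoting them. A minimal sketch of the compound-growth arithmetic follows, assuming a four-year span for the CDP figures (2024 to 2028) and a six-year span for the device count (end of 2024 to 2030).

```python
def implied_annual_growth(start, end, years):
    """Compound annual growth rate implied by a start value, end value, and span."""
    return (end / start) ** (1 / years) - 1

# CDP market: ~$2B in 2024 to ~$7B by 2028 (figures from the text).
cdp_growth = implied_annual_growth(2.0, 7.0, 2028 - 2024)

# Connected IoT devices: ~18.8B at end-2024 toward ~40B by 2030 (figures from the text).
iot_growth = implied_annual_growth(18.8, 40.0, 2030 - 2024)

print(f"CDP market: {cdp_growth:.1%} per year")
print(f"IoT devices: {iot_growth:.1%} per year")
```

Under those assumptions the CDP projection works out to roughly 37% a year and the device projection to roughly 13% a year. The first is an aggressive forecast. The second is closer to a steady build-out.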

Two newer categories sit alongside the stack. Zero-party data, where users volunteer preferences directly through preference centers, quizzes, and profile fields, surged after Privacy Sandbox failed. Brands realized the post-cookie future had not actually arrived and that asking people might be simpler than guessing. AI training corpora are the most contested form of large-scale collection right now. The UK High Court ruled on 4 November 2025 in Getty Images v Stability AI that AI model weights are not "copies" under the Copyright, Designs and Patents Act. Getty had already dropped its primary infringement claims mid-trial. AI-training collection won that round, narrowly.

Privacy, ethics, and the legal floor for collection

By 2026 three legal floors matter for most companies running collection. GDPR in the EU. CCPA and CPRA in California. And the FTC at the US federal level, leaning hard on its consumer-protection role because there is still no federal privacy law on the books. CMS Law's enforcement tracker says cumulative GDPR fines passed €5.88 billion by the end of 2024. Meta Ireland's €1.2 billion penalty from May 2023, over unlawful EU-to-US data transfers, sits at the top of that pile. Right below it: a €405 million Instagram fine on children's data from 2022.

California enforcement adds up to less in dollars but more in tempo. The regulator there picks smaller cases and resolves them faster. Sephora paid $1.2 million in August 2022 for selling personal information without an opt-out. DoorDash followed in February 2024 with a $375,000 settlement over the same kind of failure. Both cases show that "do not sell my personal information" carries weight in practice, and the agency leans on everyday breaches rather than headline-grabbing ones.

On the federal side, the FTC stayed busy through 2024. Avast paid $16.5 million in February for collecting browsing data through its antivirus product and reselling it via a subsidiary. In January, X-Mode and Outlogic both got first-of-its-kind orders barring the sale of sensitive location data. The Drizly order from October 2022 went further: it named the chief executive personally, signalling that breach response now lands on people at the top, not only on the company.

AI-training collection is the corner of all this that is still being written. The New York Times sued OpenAI on 27 December 2023. The Authors Guild had filed three months earlier, in September 2023, and both cases were still active in 2026. Getty v Stability AI then produced a UK High Court ruling on 4 November 2025 that cut against the rights holder. The court found AI model weights are not "copies" under the Copyright, Designs and Patents Act. Getty had already dropped its main infringement claims mid-trial. A LinkedIn class action filed on 21 January 2025 was voluntarily dismissed nine days later. The claim: AI training on private InMail messages. The proof: LinkedIn showed the data had not been used to train any model. The pattern so far is that AI-training collection is hard to litigate, no matter how bad the optics look.

One figure that keeps appearing in industry decks deserves a correction here. The mistake matters when readers cite it back. TikTok's 2019 COPPA settlement, against the Musical.ly entity, was $5.7 million. Not the $5.9 billion some decks still print. The newer DOJ and FTC complaint filed on 2 August 2024 separately seeks up to $51,744 per day per violation, and it is still pending in 2026.

I'm not convinced any of this gets simpler over the next year. The pragmatic shorthand for 2026: any new collection pipeline needs a privacy review before the data lands, not after. Dark-pattern enforcement is climbing under the EU Digital Services Act. Consent banners now get audited against EDPB guidance. And the motivated-intruder test from the UK ICO's March 2025 update applies to anything labelled "anonymized."

Choosing the right data collection method

The choice of data collection method is the most consequential step in the entire research process. The decision tree is short. Start with the research question. Not the tool.

If the question is "how many," go quantitative: survey, telemetry, transactional log. If the question is "why," go qualitative: interviews or open-ended responses. If it is "what is going on here that I don't yet understand," go observational. If you need both depth and scale, design a mixed-methods instrument up front. Budget twice the analysis time you think you need.
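The decision tree above is short enough to write down as a lookup. This is a sketch of the routing logic only. The question labels and the function name are hypothetical, and a real design review would also weigh the legal, budget, and time constraints.

```python
def choose_method(question, needs_depth_and_scale=False):
    """Route a research question to a family of data collection methods."""
    if needs_depth_and_scale:
        return "mixed methods: design the instrument up front, budget 2x analysis time"

    routes = {
        "how many": "quantitative: survey, telemetry, transactional log",
        "why": "qualitative: interviews or open-ended responses",
        "what is going on": "observational",
    }
    try:
        return routes[question]
    except KeyError:
        raise ValueError(f"unrecognized question type: {question!r}") from None

print(choose_method("how many"))
print(choose_method("why"))
print(choose_method("why", needs_depth_and_scale=True))
```

The input is the question, never the tool. Nothing in the lookup asks which platform the team already pays for.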

Three constraints check the choice. The ethics and legal floor: what jurisdictions does your audience sit in, and what consent and retention rules apply? The budget: focus groups at $5,000-$9,000 a session are not the right move for an exploratory question that two days of interviews would answer. The time horizon: large-N surveys take two to four weeks for a clean run, ethnography takes months, telemetry is real time but assumes the instrumentation already exists.

So: the academic taxonomy of methods has not changed in twenty years. The business stack that runs those methods has been rewritten three times in five. The legal floor moved twice in the past eighteen months. Pick the method for the question. Then assume the data-collection plan needs a privacy review before, not after, the first record lands.