Undetectable AI: ChatGPT Humanizer vs AI Detector Tools

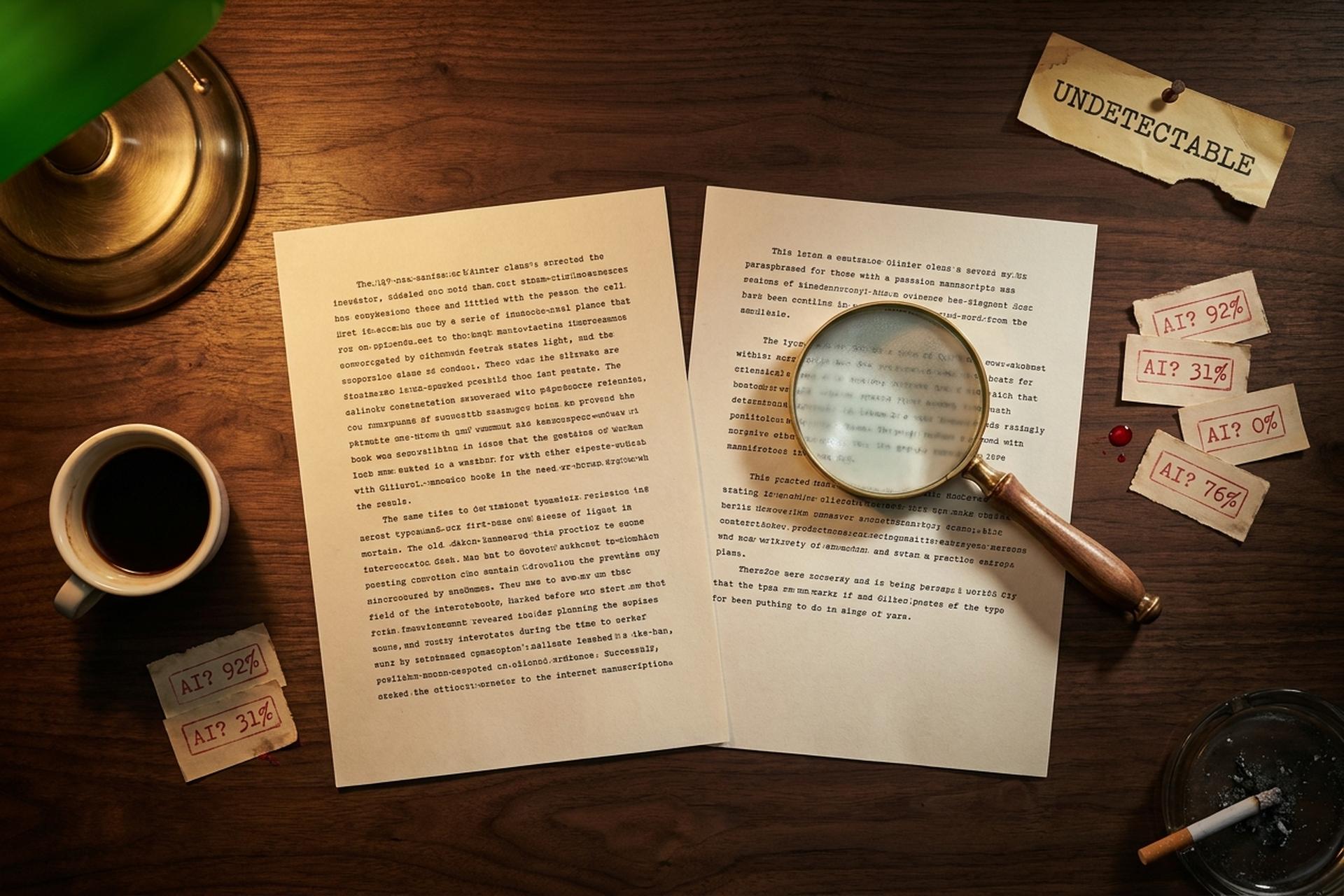

A teacher pastes a student's essay into Turnitin. The score comes back: 92% AI-generated. The student swears they wrote it. Both could be right. Both could be wrong. Welcome to the messy, billion-dollar arms race over who actually wrote anything online in 2026.

The phrase "undetectable AI" sits at the centre of that fight. It refers to a small but fast-growing category of products called AI humanizers. These tools take ChatGPT or Gemini output and rewrite it. The goal is to make detectors like Turnitin, GPTZero, and Originality.ai stop flagging it as machine-written. Twenty-plus companies operate in the space. The biggest brand, Undetectable.ai, claims 11 million users on a bootstrapped, 34-person team. The detectors on the other side process hundreds of millions of submissions a year. As a 2025 FTC settlement showed, both sides have a habit of overselling what their software can actually do.

This guide walks through every layer. What undetectable AI tools are. How detectors work. The market right now. Why some bypass attempts succeed and others fail. The false-positive scandals making courts and universities skeptical of detection. And the ethical line that's easy to cross.

What is undetectable AI? The humanizer category explained

"Undetectable AI" is shorthand for software that rewrites AI-generated content so it stops scoring as AI on detection tools. These products go by a few names. AI humanizers. AI bypassers. Anti-detection rewriters. Most sell themselves as a bypass tool for academic and SEO writing. They sit between you and the AI checkers. You paste text from ChatGPT into the tool. The humanizer paraphrases it. The new version is supposed to pass AI detection tools like Turnitin, GPTZero, Copyleaks, or ZeroGPT. Vendors pitch this as the "bypass AI detection" workflow.

The category exploded in 2023, after ChatGPT's launch made AI-generated writing trivially easy to produce and AI detectors almost as easy to find. Within a year, dozens of humanizer products were live. Most are thin paraphraser layers built on top of open-source language models. The good ones train their paraphraser on human text and on the failure modes of specific detectors. The bad ones just shuffle synonyms and break up sentences.

The use cases people advertise are broad. Content creators and marketers humanize AI-generated blog drafts to keep their search traffic without the writing sounding robotic. Non-native English writers run their drafts through a free AI humanizer to soften phrasing into more natural writing. Academic users (the controversial group) use them to disguise unauthorized AI use, sometimes edging into plagiarism territory. Customer support teams sometimes use them to turn AI output into something more conversational, like something a person would actually say. The line between legitimate editing and academic cheating is exactly where most of the policy fights are happening, and the fact that the same tool can be used in either context is the heart of the controversy. Vendors pitch these tools as boring productivity software, while critics see them as cheating infrastructure.

| Common term | What it means |
|---|---|
| AI humanizer | Tool that rewrites AI text to sound human |
| AI bypasser / detector bypass tool | Same product, framed against detectors |
| Anti-detection rewriter | Same product, framed for SEO use |
| AI detector | Tool that flags AI-generated text |
| Watermark | Statistical signal embedded in AI output |
| Provenance / Content Credentials | Cryptographic record of where content came from (C2PA) |

How AI detectors flag ChatGPT text

To bypass a detector, you have to know what it looks for. Modern AI detectors lean on a handful of signals that tend to separate machine writing from human writing.

Perplexity is the most quoted one. It measures how predictable each word is given the words before it. GPTZero, the consumer-facing detector that launched in early 2023, calls perplexity its "surprise meter." Language models tend to pick high-probability next words, so predictable, low-perplexity text reads as machine-generated. Humans, especially when they get bored or frustrated mid-sentence, drop in odd word choices that push the perplexity up.
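To make the "surprise meter" concrete, here is a minimal sketch of a perplexity score. It assumes a toy add-one-smoothed unigram model built from a one-line reference corpus. Real detectors score text with a large neural language model, so the function names, the corpus, and the smoothing choice are illustrative assumptions, not GPTZero's implementation.

```python
import math
from collections import Counter


def train_unigram(corpus: str) -> tuple[Counter, int]:
    """Count word frequencies in a reference corpus (toy stand-in for a real LM)."""
    tokens = corpus.lower().split()
    return Counter(tokens), len(tokens)


def perplexity(text: str, counts: Counter, total: int) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model."""
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")
    vocab_size = len(counts) + 1  # one extra slot for an "unseen word" bucket
    log_prob = sum(
        math.log((counts[tok] + 1) / (total + vocab_size)) for tok in tokens
    )
    # Perplexity is exp of the average negative log-probability per token.
    return math.exp(-log_prob / len(tokens))


# Hypothetical reference corpus and test sentences, for illustration only.
reference = "the cat sat on the mat the dog sat on the rug"
counts, total = train_unigram(reference)

predictable = perplexity("the cat sat on the mat", counts, total)
surprising = perplexity("the cat juggled seven purple mats", counts, total)
print(predictable < surprising)  # the familiar sentence scores lower perplexity
```

The sentence built from words the model has seen often gets the lower score. That is the same direction a detector reads: low perplexity looks machine-like, high perplexity looks human.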

Then there's burstiness. Human writing tends to vary widely in sentence length and complexity across a paragraph. A short fragment. Then a long meandering sentence with three clauses. Then a punchy four-word sentence. LLM output is more uniform: sentences cluster around 14 to 22 words and stay that way. Detectors measure that variance.
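One simple way to put a number on burstiness is the spread of sentence lengths relative to their average. The sketch below assumes that definition (the coefficient of variation of words per sentence) and a naive punctuation-based sentence splitter. Commercial detectors do not publish their exact formula, so treat both as assumptions.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? followed by whitespace and count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Standard deviation of sentence length divided by the mean length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is undefined for a single sentence
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical samples: one with varied rhythm, one with uniform sentences.
varied = (
    "A short fragment. Then a long meandering sentence with three clauses "
    "that keeps going and going. Then a punchy one."
)
uniform = (
    "The tool rewrites the text for you. The model scores the text for you. "
    "The user checks the text for you."
)

print(burstiness(varied) > burstiness(uniform))
```

The uniform sample has three seven-word sentences, so its score is zero. The varied sample mixes a three-word fragment with a much longer sentence and scores well above that.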

N-gram frequency comes in next. Specific phrases ("delve into," "vibrant tapestry," "in today's rapidly evolving landscape") appear far more often after 2023 than they did before. Detectors maintain pattern libraries of these AI-tells, and the bigger libraries are updated constantly.
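A pattern library can be as simple as a list of phrases and a counter. The sketch below uses only the three phrases quoted above. The function names and the "hits per 100 words" metric are assumptions for illustration, and a real library would be far larger and weighted by how rare each phrase was before 2023.

```python
AI_TELLS = (
    "delve into",
    "vibrant tapestry",
    "in today's rapidly evolving landscape",
)


def tell_hits(text: str, phrases: tuple[str, ...] = AI_TELLS) -> dict[str, int]:
    """Count case-insensitive occurrences of each known phrase."""
    # Collapse whitespace and normalise curly apostrophes before matching.
    normalized = " ".join(text.lower().replace("\u2019", "'").split())
    return {p: normalized.count(p) for p in phrases if p in normalized}


def tells_per_100_words(text: str) -> float:
    """Phrase hits per 100 words, so long and short texts are comparable."""
    words = len(text.split())
    if words == 0:
        return 0.0
    return 100 * sum(tell_hits(text).values()) / words


# Hypothetical sample text.
sample = "Let's delve into the vibrant tapestry of AI. Then we delve into it again."
print(tell_hits(sample))
print(round(tells_per_100_words(sample), 1))
```

A count like this is only a weak signal on its own, since plenty of people wrote "delve into" before 2023. That is why it is treated as one input among several rather than a verdict.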

And finally, a fine-tuned neural classifier. Most modern detection tools stack a BERT or RoBERTa-class model on top of the stats. These classifiers are trained on labeled human and AI text to tell AI-written passages from human ones. The output is a probability score that the content was generated by AI. GPTZero now bundles seven separate components. Stylometric profiles, live web search, sentence structure analysis, and patterns of length and complexity all feed into the score.

Some detectors also look for watermarks. Google's SynthID embeds a statistical signal in Gemini text. OpenAI has internally validated a watermark for ChatGPT (Wall Street Journal, August 2024) but hasn't shipped it. According to OpenAI's own user survey, around 30% of ChatGPT users said they'd use the product less if their output were watermarked. Image watermarking is further along: OpenAI joined C2PA in May 2024 and now attaches Content Credentials to DALL-E 3 outputs by default.
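What "a statistical signal" means is easier to see in code. The sketch below assumes the "green list" scheme described in academic watermarking research: a hash of the previous token marks part of the vocabulary as green, the generator favours green tokens, and the detector counts how many it finds. This is not SynthID's or OpenAI's actual method, and the hashing rule, the 50% green fraction, and the toy generator are all illustrative assumptions.

```python
import hashlib
import math

GAMMA = 0.5  # assumed fraction of the vocabulary that is "green" at each step


def is_green(prev_token: str, token: str, gamma: float = GAMMA) -> bool:
    """Pseudo-randomly assign `token` to the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    return digest[0] / 256 < gamma


def watermark_z_score(tokens: list[str], gamma: float = GAMMA) -> float:
    """How many standard deviations the green count sits above chance."""
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        raise ValueError("need at least two tokens")
    green = sum(is_green(prev, tok, gamma) for prev, tok in pairs)
    n = len(pairs)
    return (green - gamma * n) / math.sqrt(n * gamma * (1 - gamma))


def toy_watermarked(length: int, vocab: list[str]) -> list[str]:
    """Toy generator that always emits the first green token it can find."""
    tokens = [vocab[0]]
    for _ in range(length):
        tokens.append(next(t for t in vocab if is_green(tokens[-1], t)))
    return tokens


vocab = [f"w{i}" for i in range(50)]  # hypothetical 50-word vocabulary
marked = toy_watermarked(100, vocab)
print(watermark_z_score(marked))  # every pair is green, so the score is sqrt(100)
```

Unwatermarked text should land near zero on this score, because about half of its tokens are green by chance. Paraphrasing replaces tokens and pulls a watermarked text back toward that baseline, which is why watermarks are also a target for humanizers.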

How undetectable AI works under the hood

Humanizers attack the same signals detectors look at, but in reverse. Each tool is designed to undo what the detector flagged. The marketing pitch is always some variant of "an advanced rewriter that turns AI text into natural-sounding writing." Vendors compete to claim they're among the most accurate AI rewriters or the most accurate AI detection tools, depending on which side of the arms race they're on.

A typical pipeline starts simple. Run the input through an online AI paraphraser. The model is fine-tuned on human writing. It raises perplexity by injecting unexpected word choices. It varies sentence length and structure to break up the uniformity that burstiness checks look for. It swaps flagged n-grams for less common phrasing. Vendors claim their tool can transform AI output to sound more human. They claim it slips past AI detector checks while preserving the original meaning. Does it actually deliver high-quality content? That varies wildly between products. Some pitch the tool as making your text undetectable in one click. The actual output does not always live up to the marketing copy.

The University of Maryland published the strongest theoretical paper on this in 2023. The team was led by Soheil Feizi. Their preprint "Can AI-Generated Text be Reliably Detected?" (arXiv:2303.11156) made one big claim. A lightweight neural paraphraser placed on top of a language model defeats every detection method. Watermarking. Neural classifiers. Zero-shot detection. All of them. Feizi's quote in the UMD news release was direct: "We should just get used to the fact that we won't be able to reliably tell if a document is either written by AI or by humans."

Better humanizers go further. They train against specific detectors. The product team takes a dataset of AI text from ChatGPT, runs it through Turnitin or GPTZero, and trains the paraphraser to minimize whatever score the detector produces. The goal is to make AI text sound human enough to slip past the classifier. This is essentially adversarial training in reverse. The user gets a tool optimized to beat one specific opponent, and vendors often advertise that the result passes AI detection consistently. It's also why bypass rates differ wildly across detectors for the same humanizer output. In practice, a humanizer helps reduce the score but rarely zeroes it. Marketing claims that the tool turns AI text into natural-sounding human writing usually overstate the consistency.

The trade-off is quality. User reviews on DitchNet and Reddit's r/WritingWithAI flag the same complaint over and over. Humanizers tend to inject filler. "I think." "From my experience." Phrases like that get jammed in where they don't fit. Sentence connections break. Some passes flatten brand voice. One reviewer rated free-tier output "about 5 out of 10 for public-facing content." A humanizer can drop a detector score from 99% to 50%. But if the writing then reads strangely, the gain is academic.

The market: Undetectable.ai, BypassGPT, QuillBot, and more

The market leader is Undetectable.ai. The platform combines an undetectable AI humanizer, a free detector, and a Chrome extension. The company was founded in January 2023. Founders: Christian Perry, Devan Leos, and Bars Juhasz. Ben Miller joined later as COO. The legal HQ is at 1309 Coffeen Avenue in Sheridan, Wyoming. Press releases also list a Boise, Idaho base. Undetectable.ai is bootstrapped. No disclosed venture funding. Per PR Newswire, the company hit 11 million users by November 2024. That's 18 months after launch. GetLatka pegged Undetectable.ai at $3.7M ARR in September 2025. About 34 employees. Tracxn flagged an unconfirmed M&A offer in April 2025.

The competitive set is wide and price-segmented:

| Tool | Founder / parent | Entry plan | Top plan | Notable |
|---|---|---|---|---|
| Undetectable.ai | Christian Perry | $9.99/mo | Unlimited | 11M users (Nov 2024) |
| StealthGPT | Jozef Gherman | $14.99/mo | $29.99/mo + $4.99 add-on | $2.2M revenue (Dec 2023) |
| BypassGPT | HIX.AI | $6.99/mo | $29.99/mo | Limited free tier |
| HIX Bypass | HIX.AI | Free 20 credits | $49.99/mo unlimited | Premium positioning |
| QuillBot Humanizer | Learneo (parent of Course Hero) | $4.17/mo (annual) | n/a | 50M+ users across QuillBot suite |
| Phrasly | independent | Free 550 words | $12.99/mo unlimited | Annual billing |
| Walter Writes AI | independent | ~$13/mo (annual) | ~$25/mo | Premium positioning |

Undetectable.ai positions itself as a one-stop shop with both detection and humanization in the same dashboard. Its detector claims 99% accuracy and "100% detection in peer-reviewed studies." Its humanizer pitches multi-detector scoring, which means it tests output against eight or so detector models simultaneously before returning a result. The Chrome extension and 50-language coverage are real differentiators.

The category leader on the AI-content-creation side is QuillBot, owned by Learneo (the same parent as Course Hero). QuillBot's broader writing suite is used by 50 million-plus people, and the AI Humanizer is one of dozens of features. QuillBot's own AI Detector scans up to 1,200 words at a time for free, six scans per day. Both products are popular with students, which is exactly why universities now monitor QuillBot use specifically.

The market math is small relative to its visibility. Generative AI as a whole was a $59 billion category in 2025 (Statista). The AI detector market alone is much smaller. Per MarketsandMarkets, roughly $0.58 billion in 2025. Projected to reach $2.06 billion by 2030. The humanizer side is smaller still and more fragmented. No aggregated figure exists. A bottom-up estimate based on disclosed revenues across 30 tracked tools puts the entire sector at $50 million to $150 million in annual recurring revenue.
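For a sense of scale, the figures above can be turned into a growth rate and a ratio. The sketch below is simple arithmetic on the numbers quoted in this section. The compound annual growth rate is implied by the two endpoints rather than taken from the MarketsandMarkets report, and the variable names are illustrative.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and span."""
    return (end / start) ** (1 / years) - 1


# Detector market, in billions of dollars (figures quoted above).
detector_2025 = 0.58
detector_2030 = 2.06
detector_cagr = implied_cagr(detector_2025, detector_2030, 2030 - 2025)

# Humanizer sector estimate, in billions of dollars of ARR (figures quoted above).
humanizer_low, humanizer_high = 0.05, 0.15
share_low = humanizer_low / detector_2025
share_high = humanizer_high / detector_2025

print(f"Implied detector CAGR: {detector_cagr:.1%}")
print(f"Humanizers vs detectors: {share_low:.0%} to {share_high:.0%}")
```

The detector forecast works out to just under 29% a year. On the bottom-up estimate, humanizers bring in somewhere between roughly a tenth and a quarter of what detectors do.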

Do AI humanizers actually bypass detectors?

The short answer: sometimes, against some detectors, with significant quality cost.

The longer answer comes from independent testing. Originality.ai, itself a detector vendor, ran a controlled experiment on Undetectable.ai's humanizer. Both the original ChatGPT text and the humanized version scored 100% AI on Originality.ai, with equal confidence. Writer.com showed barely any movement (6% to 3%). GPTZero dropped from 100% to 91%. The bypass effect was marginal at best on the strongest detectors.

A more thorough 2026 review at Aithor ran Undetectable.ai's output through four detectors and produced this table:

| Detector | Original AI score | After humanizer | Result |
|---|---|---|---|
| GPTZero | 97% | 72% | Partially bypassed |
| Originality.ai | 99% | 81% | Not bypassed |
| Copyleaks | Flagged | Flagged | Not bypassed |
| ZeroGPT | 94% | 61% | Partially bypassed |

The pattern is consistent across reviews. ZeroGPT and GPTZero are easier to drop. Originality.ai and Copyleaks tend to hold. That's not a coincidence. Originality.ai is built specifically for adversarial paraphrased text, and its internal benchmarks (published in Pangram's January 2026 JAIT paper) show roughly 97% detection on QuillBot-paraphrased samples.

Vendor accuracy claims rarely survive contact with independent testing.

| Detector | Vendor claim | Independent testing |
|---|---|---|
| Turnitin | 98% accuracy, <1% false positive | ~85% recall (admitted by Turnitin's own product officer); AI-flagged cases overstated |
| Originality.ai | "Industry leader" | Strong on raw AI, drops on adversarial |
| Copyleaks | 99.12% | ~50% on QuillBot-paraphrased text |
| GPTZero | "Multilayer 7-component" | 1-2% false positive on pre-AI essays |
| Winston AI | 99.98% | Variable: 100% on blog post, 3% on e-book sample |
| OpenAI Classifier | n/a | 26% recall when shut down July 2023 |

No detector is perfect across these conditions. Vendors that claim otherwise tend to publish results from narrow benchmarks run on AI-generated content produced under controlled prompts.
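A low false-positive rate matters less than it sounds once you account for how many submissions are AI-written in the first place. The sketch below plugs the 85% recall and 1% false-positive figures from the Turnitin row into a hypothetical batch of 1,000 essays. The two AI shares (10% and 2%) are assumptions chosen for illustration, not measured values.

```python
def flagged_breakdown(
    n_essays: int, ai_share: float, recall: float, false_positive_rate: float
) -> tuple[float, float, float]:
    """Return (correct flags, wrongful flags, share of flags that are correct)."""
    ai_essays = n_essays * ai_share
    human_essays = n_essays - ai_essays
    true_flags = ai_essays * recall  # AI essays the detector catches
    false_flags = human_essays * false_positive_rate  # human essays wrongly flagged
    precision = true_flags / (true_flags + false_flags)
    return true_flags, false_flags, precision


# Hypothetical batch where 10% of essays are AI-written.
caught, wrongly_flagged, precision = flagged_breakdown(1000, 0.10, 0.85, 0.01)
print(caught, wrongly_flagged, round(precision, 3))

# Same detector, but only 2% of essays are AI-written.
_, wrongly_flagged_low, precision_low = flagged_breakdown(1000, 0.02, 0.85, 0.01)
print(wrongly_flagged_low, round(precision_low, 3))
```

In the first scenario about 9 of 94 flags land on human writers. In the second, more than a third of all flags do, even though the detector itself has not changed.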

OpenAI's own AI Classifier is the most damning case. The company launched it in January 2023, then quietly shut it down on July 20 the same year. The reason: 26% true-positive rate. OpenAI itself admitted the model was "unreliable." It hasn't released a replacement. Its watermarking research, internally validated at 99.9% accuracy per the WSJ report, sits unreleased two-and-a-half years later.

False positives: when AI detectors flag human writing

The bigger story in 2024-2026 is that AI detectors fail badly in the other direction too.

Stanford's James Zou and his team published "GPT detectors are biased against non-native English writers" in Patterns (July 2023, arXiv:2304.02819). They ran TOEFL essays from non-native English students through seven major detectors. The detectors flagged 61.22% of those essays as AI-generated. The same detectors flagged near-zero percent of essays written by US-born 8th graders. The bias has a simple technical reason. Lower lexical diversity and simpler syntax in second-language English look "AI-like" to perplexity-based scorers. The harm is concrete. It falls hardest on international students at exactly the institutions deploying these tools.

Common Sense Media's 2024 report on AI-detection harms widened the picture. Around 10% of teens overall reported being falsely accused of using AI. The number rose to 20% among Black teens, versus 7% for white students and 10% for Latino students. The disparate impact maps onto known bias in the underlying language models, plus how teachers respond when a tool flags a student.

The most public early disaster was Texas A&M-Commerce in May 2023. Agriculture instructor Dr. Jared Mumm pasted student essays into ChatGPT. He asked the model if it had written them. ChatGPT said yes to all of them. (It was helpful, as ever.) Mumm then failed half the class. The university reversed course days later. Students used Google Docs version history to prove they'd written the essays themselves. The Washington Post, NBC News, Rolling Stone, and Inside Higher Ed all covered the story.

Bigger institutions started disabling Turnitin's AI detection feature outright. UCLA, UC San Diego, Cal State LA, and Vanderbilt all turned it off. They cited false positives and disparate impact on international students. The California State University system alone spent $1.1 million on AI-detection software in 2024-25. Total California public-system spend topped $15 million.

Then in August 2025, the FTC dropped the hammer. Workado is the renamed entity that owned Content at Scale's "AI Content Detector." The company had advertised 98% accuracy. FTC investigators found the actual accuracy on general-purpose content was 53%. The model had been trained on academic prose only. It degraded badly on anything else. The August 28, 2025 consent order required Workado to stop the unsupported claims. The order also required FTC-drafted notices to existing customers. It was the first FTC enforcement action against an AI-detection vendor for false advertising.

The ethical line: when humanizing AI text becomes risky

Most uses of an AI humanizer are legal. Most are not cheating either. It depends on context.

Legitimate use looks like this. A small business owner runs a ChatGPT-drafted blog post through a humanizer. They want to soften the corporate tone. Then they edit it before publishing. A non-native English writer uses a humanizer like they'd use a grammar checker. The goal is to refine phrasing without changing meaning. A marketing team paraphrases internal product copy. None of these violate a policy or a contract. None pretend the work is something it isn't.

The risky use is academic. Most universities prohibit unauthorized AI use in coursework. A growing number now ban AI humanizers specifically. Turnitin's August 2025 update added a counter-bypass feature aimed at the most common humanizer patterns. Submitting humanized AI text for an assignment that requires original work is academic dishonesty. That holds under most institutional policies. It holds whether the detector catches you or not. The dishonesty is the deception about authorship. The bypass is just the method.

Commercial publishing is the murkier middle. The New York Times cut ties with freelance critic Alex Preston in January 2026. An investigation found AI-generated paragraphs in his book reviews, paraphrased from a Guardian piece. The Washington Post had its own incident in December 2025. An internal AI-podcast feature shipped fabricated quotes. Semafor's investigation broke the story. Neither newsroom prohibits AI use entirely. Both prohibit undisclosed AI use that the audience would reasonably expect to be human-written.

A safer ethical default looks like this. If the audience would care that the text was AI-assisted, disclose it. If the assignment forbids AI use, don't run the output through a humanizer to disguise the fact. No humanizer, however undetectable its output, lets you avoid that ethical question, even when it genuinely helps with phrasing. If you're using a humanizer to sound less corporate or to fix second-language phrasing in your own draft, you're closer to the editing end of the spectrum, in both academic and professional contexts. Most policies have less to say about that.

The policy direction is shifting toward provenance over detection. C2PA stands for Coalition for Content Provenance and Authenticity. It embeds cryptographic Content Credentials in images and video. OpenAI joined the steering committee in May 2024. The company now attaches credentials by default to DALL-E 3 outputs. Adobe, Microsoft, Google, BBC, NYT, and Sony are all members. The C2PA spec is being fast-tracked as an ISO standard. For text, the equivalent watermarking standards remain unsolved at scale. Until they ship, the bypass-vs-detection arms race continues.