Unstable Diffusion AI: NSFW Uncensored Stable Diffusion Fork

If you have searched for "Unstable Diffusion AI" in the last year, you have probably hit a wall of contradictory pages. Some describe a Discord community that ballooned from 50,000 members in November 2022 to roughly 97,000 by December and then to over 300,000 on third-party listings by 2025. Others describe a slick paid web app with a free tier and four named models. The confusing part is that both are real, both share the name, and both started from the same idea: take Stable Diffusion, strip the safety layer, let the internet generate whatever it wants.

This guide untangles the two. We cover what Unstable Diffusion AI actually is in 2026, how it relates to Stable Diffusion from Stability AI, the December 2022 Kickstarter ban story, the model lineup, the pricing, the still-open ethical questions, and the alternatives that have already eclipsed it. The goal is the kind of explanation a curious outsider can read once and understand, not a hype piece.

What Unstable Diffusion AI Actually Is in 2026

The name "Unstable Diffusion" covers a few overlapping things, and people mix them up constantly. The original is a community that started as a Reddit thread in August 2022 and migrated almost immediately to Discord, where it became a hub for uncensored Stable Diffusion outputs and fine-tuned weights. The public faces of the project are CEO Arman Chaudhry and co-admin AshleyEvelyn, working under the parent company Equilibrium AI.

That Discord community spun off a paid web platform at unstability.ai, which today sells subscription access to four named in-house fine-tunes (Merlin, Echo, Izanagi, Pan) trained on a curated dataset of more than 30 million adult images. It is the same project, not a clone. Confusion comes from a separate BasedLabs storefront that surfaces unstability.ai under the "Unstable Diffusion" brand on its tools directory, which makes both names show up in the same search results.

A third use of the name is loose and journalistic: any open-source Stable Diffusion fork or fine-tune capable of NSFW output, regardless of who made it. That third meaning has become less useful as the wider community has moved on to checkpoints like Pony Diffusion V6 XL and FLUX-based fine-tunes that have nothing structurally to do with the Unstable Diffusion team. Throughout this guide we will be specific about which Unstable Diffusion is meant whenever a number, price, or model name only applies to one of them.

Unstable vs Stable Diffusion: Two Models

Quick refresher first. Stable Diffusion is a text-to-image model open-sourced in August 2022 by Stability AI, CompVis, and Runway. It's a diffusion model. That means it starts with random noise and slowly denoises into a real image, guided by your text prompt. Version 1.4 ran on a regular consumer GPU with 10 GB of VRAM. The license was permissive. That openness is why the model spread so fast, and why every fork, including Unstable Diffusion, exists at all.

Out of the box Stable Diffusion shipped with a CLIP-based NSFW filter and a training set that, by Stability AI's own count, was only about 2.9% adult content. So the base model could technically generate nudity. But its sense of human anatomy in those contexts was thin, and the filter usually got in the way anyway.

Then Stable Diffusion 2.0 dropped on November 24, 2022. The release stripped many NSFW concepts out of the training data altogether. The community blew up. Emad Mostaque, then-CEO of Stability AI, tried to explain. You can't have kids and NSFW in the same open model, he argued, because that combination opens a clean path to CSAM. The community heard censorship and not much else. Within weeks the internet was full of pipelines and fine-tuned checkpoints meant to put back what Stability had taken out.

Unstable Diffusion is the most visible piece of that pushback. The Discord crew focused on collecting volunteer-curated NSFW datasets and fine-tuning Stable Diffusion in directions Stability AI would never touch. The unstability.ai product followed the same logic but wrapped it in a hosted web app with paid tiers. Either way, underneath, you get the same latent diffusion architecture. What changes is the dataset. The safety layer. And the business model bolted on top.

Discord Origins, Kickstarter Ban, and the Patreon Pivot

The Unstable Diffusion subreddit went up in August 2022. Just weeks after Stable Diffusion 1.4 was open-sourced. The action moved to Discord almost overnight. By the time TechCrunch profiled the server on November 17, 2022, it had hit roughly 50,000 members. Six weeks later it was past 97,000. Third-party Discord trackers in 2025 claimed numbers as high as 344,000, but those come from listing sites rather than Discord itself, so the figure is soft.

A Patreon page went live September 13, 2022. It peaked around $2,500 a month by late 2022. Then it just sat there. As of April 2026, Graphtreon shows the page sitting at roughly $1,998 a month from 149 paid patrons. That puts it 336th on Graphtreon's "Adult Writing" leaderboard. Twenty percent below the 2022 peak, even though the AI-generated adult content market has since ballooned to an estimated $2.5 billion inside an online adult entertainment market of around $73.6 billion. So the story isn't "early mover crushes the field" anymore. It's "early mover, slow flatline."

The Kickstarter chapter is the one everyone remembers. The campaign launched in December 2022 with a $25,000 goal. It cleared that goal inside a day. By December 21, 2022, when Kickstarter pulled the plug, 867 backers had pledged about $56,000. Kickstarter CEO Everette Taylor wrote a statement saying that "Kickstarter must, and will always be, on the side of creative work and the humans behind that work." Kickstarter's all-or-nothing model meant every dollar got refunded. Arman Chaudhry's reply was blunt: "While Kickstarter's capitulation to a loud subset of artists disappoints us, we and our supporters will not back down."

The team pivoted back to Patreon and added direct Stripe donations. Together those raised maybe $26,000 cumulatively. But the bigger story showed up years later. On May 23, 2025, Visa and Mastercard's processor cut service to CivitAI, the largest hub for NSFW AI checkpoints, and the site moved onto crypto rails like USDC and ETH. Same pattern Unstable Diffusion hit in 2022. Three years on. At several times the scale.

The table below sums up Unstable Diffusion's funding history.

| Date | Source | Amount | Status |
|---|---|---|---|
| Sept 13, 2022 | Patreon launch | up to ~$2,500/mo by late 2022 | Active |
| Dec 2022 | Kickstarter | $56,000 from 867 backers (goal $25K) | Suspended Dec 21, 2022; refunded |
| 2023 | Stripe direct donations | ~$26,000 cumulative | Active |
| Apr 2026 | Patreon today | ~$1,998/mo from 149 patrons | Active, ranked #336 in Adult Writing |
| 2023-2026 | unstability.ai subscriptions | Undisclosed | Active credit-based tiers |
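
The headline figures in this funding history can be cross-checked with a few lines of arithmetic. The sketch below uses only numbers quoted in this section; the variable and function names are illustrative, and the market-size inputs are the article's estimates rather than audited data.

```python
def pct_change(old, new):
    """Percentage change from old to new (negative means a decline)."""
    return (new - old) / old * 100


# Figures as quoted in this section.
patreon_peak, patreon_now = 2500, 1998            # USD per month
kickstarter_goal, kickstarter_pledged = 25_000, 56_000
kickstarter_backers = 867
ai_adult_market, adult_market = 2.5, 73.6         # USD billions, estimates

patreon_decline = pct_change(patreon_peak, patreon_now)
funded_ratio = kickstarter_pledged / kickstarter_goal
average_pledge = kickstarter_pledged / kickstarter_backers
ai_share = ai_adult_market / adult_market * 100

print(f"Patreon vs peak: {patreon_decline:.1f}%")
print(f"Kickstarter funded: {funded_ratio:.2f}x goal")
print(f"Average pledge: ${average_pledge:.2f}")
print(f"AI share of adult market: {ai_share:.1f}%")
```

Run as written, this confirms the roughly 20% Patreon decline, shows the Kickstarter closing at about 2.24 times its goal with an average pledge near $65, and puts the estimated AI slice at about 3.4% of the wider market.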

How the Unstable Diffusion Model Generates NSFW Images

Under the hood, both flavors of Unstable Diffusion run the same latent diffusion pipeline as base Stable Diffusion. The user types a prompt. The text becomes an embedding that points the model toward a region of its learned image space. The model then iteratively denoises a random latent image, step by step, until it matches the prompt closely enough to stop. Producing the final image typically takes 20 to 50 denoising steps, depending on the speed-versus-quality preset chosen.
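
To make the shape of that loop concrete, here is a toy sketch in plain Python. It is not a real diffusion sampler: `embed_prompt` is a hypothetical stand-in for a text encoder, and the "denoiser" simply closes the gap to a known target instead of asking a neural network to predict noise. In a real model the embedding conditions the network rather than acting as the target. The only point is the control flow: start from random noise, refine over a fixed number of steps.

```python
import hashlib
import random


def embed_prompt(prompt, dim=4):
    """Hypothetical text encoder: hash the prompt into `dim` floats in [0, 1]."""
    digest = hashlib.sha256(prompt.encode("utf-8")).digest()
    return [digest[i] / 255.0 for i in range(dim)]


def toy_denoise(target, steps=30, seed=0):
    """Start from Gaussian noise and close the gap to `target` over `steps` updates.

    Returns the final latent and the mean absolute error after each step.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    rng = random.Random(seed)
    latent = [rng.gauss(0.0, 1.0) for _ in target]  # random starting "latent"
    errors = []
    for step in range(steps):
        remaining = steps - step
        # A real sampler has a network predict the noise to remove at this step.
        # Here the "prediction" is just the known gap to the target.
        latent = [x + (t - x) / remaining for x, t in zip(latent, target)]
        errors.append(sum(abs(t - x) for x, t in zip(latent, target)) / len(target))
    return latent, errors


target = embed_prompt("a lighthouse at dusk")
latent, errors = toy_denoise(target, steps=30)
print(f"error after step 1: {errors[0]:.4f}, after step 30: {errors[-1]:.2e}")
```

Changing `steps` between 20 and 50 changes how finely the gap is closed per iteration, which is the same knob the speed-versus-quality presets turn in a real pipeline.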

So if the architecture is the same, what actually differs from base Stable Diffusion? Three things, in roughly this order of importance.

First, the dataset. Stability AI trained mostly on safe-for-work content and stripped much of the adult imagery from its training corpus. Unstable Diffusion's team has built and maintained a dataset of more than 30 million adult images, sourced through volunteer curation. That fixes the anatomy gap and the genre coverage that vanilla Stable Diffusion was weak at out of the box.

Second, the filter. Stable Diffusion checkpoints from Stability AI ship with a CLIP-based safety classifier that flags and blocks unsafe outputs by default. Unstable Diffusion derivatives strip that classifier or wave it off. On unstability.ai, the default filter gets replaced with an age-verification gate that only triggers when the user explicitly asks for adult content. Anything SFW just runs.

Third, the guardrails. Even the most permissive forks try to block clearly illegal content. The standing policy: anything depicting minors or non-consenting people gets rejected at the prompt level and again via post-generation moderation. In practice? Depends on the operator. Some moderate aggressively. Others mostly do not.
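
The two-stage policy described above (reject at the prompt, then check again after generation) can be sketched as a thin wrapper. This is a minimal illustration, not how any particular operator implements it: `generate`, `prompt_ok`, and `image_ok` are hypothetical callables supplied by the caller, and the real classifiers behind them are where the hard work lives.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Result:
    image: Optional[bytes]
    rejected_at: Optional[str]  # "prompt", "output", or None if allowed


def generate_with_guardrails(
    prompt: str,
    generate: Callable[[str], bytes],
    prompt_ok: Callable[[str], bool],
    image_ok: Callable[[bytes], bool],
) -> Result:
    """Run a prompt-level check, generate, then run a post-generation check."""
    if not prompt_ok(prompt):
        # Rejected before any compute is spent on generation.
        return Result(image=None, rejected_at="prompt")
    image = generate(prompt)
    if not image_ok(image):
        # Generated, but withheld by the output moderation pass.
        return Result(image=None, rejected_at="output")
    return Result(image=image, rejected_at=None)
```

How strict the two checks are is exactly the operator-dependent part: the wrapper is identical whether the callables moderate aggressively or let almost everything through.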

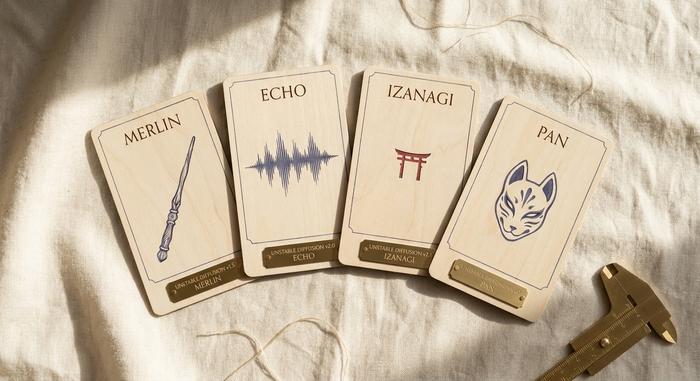

Inside the Model Lineup: Merlin, Echo, Izanagi, Pan

The branded lineup is mostly an unstability.ai thing, but it is the most public product face the brand carries today. Instead of versioned releases like Stable Diffusion 1.5 or SDXL 1.0, the platform groups its checkpoints by stylistic intent.

| Model | Designed for | Notes |
|---|---|---|
| Merlin | General-purpose generation | Default option, balanced realism and stylization |
| Echo | Photorealistic portraits and product shots | Best for human likenesses and skin detail |
| Izanagi | Anime and manga art | Tuned on illustrated and stylized references |
| Pan | Anthropomorphic and furry art | Niche, but heavily requested by the community |

There is also a parallel speed lineup. Unstable Diffusion v2.6 is the speed-tuned default at 6 to 8 seconds per image. Unstable Diffusion XL takes 12 to 15 seconds for higher resolution. Unstable Diffusion Photoreal handles portrait work. None of these inherits directly from the original Discord community fine-tunes, even though the marketing leans hard on the shared brand. To judge output quality, the cleanest test is a side-by-side against base SDXL or a similarly tuned popular CivitAI checkpoint.

Pricing, Credits, and Platform Access for Creators

Pricing for the unstability.ai web platform follows a familiar SaaS pattern. There is a free tier with a limited daily allowance, a Basic tier with a fixed daily credit budget, Premium and Pro tiers that unlock unlimited generations and commercial use, and a top tier that adds private generation history. Generation speed is gated by a "fast credits" pool that refills monthly.

| Tier | Cost / month | Daily credits | Fast credits / month | Commercial use |
|---|---|---|---|---|
| Free | $0 | Limited daily allowance | None | No |
| Basic | $14.99 | 150 | 1,000 | No |
| Premium | $29.99 | Unlimited | 3,000 | Yes |
| Pro | $59.99 | Unlimited | 6,000 | Yes (private data) |
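
One way to compare the paid tiers is the price per 1,000 fast credits. The sketch below uses the prices and credit pools from the table; the `TIERS` mapping and function name are illustrative, and the calculation ignores the daily-credit and commercial-use differences.

```python
# (monthly price in USD, fast credits per month), taken from the table above.
TIERS = {
    "Basic": (14.99, 1000),
    "Premium": (29.99, 3000),
    "Pro": (59.99, 6000),
}


def cost_per_1000_fast_credits(price, credits):
    """Monthly price normalized to a block of 1,000 fast credits."""
    return round(price / credits * 1000, 2)


unit_costs = {
    name: cost_per_1000_fast_credits(price, credits)
    for name, (price, credits) in TIERS.items()
}

for name, cost in unit_costs.items():
    print(f"{name}: ${cost:.2f} per 1,000 fast credits")
```

On these numbers Basic works out to about $14.99 per 1,000 fast credits, while Premium and Pro both land at roughly $10. The step from Premium to Pro therefore buys more volume and private generation history, not a cheaper unit rate.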

Discord access for the original community is separate. The Discord remains free to join but operates as a chat server, not a generation backend. Models trained or shared in the community typically run locally on the user's own GPU, via tools like AUTOMATIC1111's Stable Diffusion WebUI, ComfyUI, or InvokeAI, or get uploaded to checkpoint hubs like CivitAI for download. There is no central paywall on the community side.

For most creators looking at this in 2026, the practical choice is between three paths. Pay a subscription on a hosted uncensored platform. Run an open checkpoint locally on a 12 GB or 16 GB consumer GPU. Or use a CivitAI-style hub that bundles many fine-tuned models with a generous free tier and a credit system on top. Each path has tradeoffs in privacy, speed, model variety, and content policy.

Uncensored AI Content Controversy: Ethics and CSAM

You can't honestly write about Unstable Diffusion without the ethics layer. Three problems keep showing up. Every one has a real incident attached.

Take non-consensual imagery first. The defining case is the Atrioc scandal of January 30, 2023. Twitch streamer Brandon Ewing accidentally exposed a browser tab during his own livestream. The tab? A paid site selling deepfake porn of his colleagues. Pokimane. QTCinderella. Maya Higa. Sweet Anita. He apologized on camera the next day. He also reportedly wired $60,000 to cover legal takedowns for the affected streamers. Twitch quietly updated its terms in March 2023 to permanently ban anyone making deepfake content. One incident did more to drag open-source diffusion into mainstream news than any technical policy debate ever had.

Now the dataset issue. In December 2023, Stanford Internet Observatory ran the LAION-5B training set through PhotoDNA. They flagged 1,008 verified child sexual abuse images sitting inside it. LAION-5B was the same dataset Stable Diffusion 1.5 trained on. LAION pulled it and shipped a scrubbed Re-LAION-5B in August 2024. Trouble is, every model trained on the original sits downstream of that contamination. SD 1.5 included. So does the entire NSFW fine-tune ecosystem built on top of it. Some forks have done the work to retrain or scrub. Others just kept shipping. If you're a buyer, look up what dataset a given checkpoint actually trained on. Don't take the operator's word for it.

The third issue is the regulatory shadow. Every major payment processor in 2026 treats AI-generated adult imagery as high-risk. CivitAI's card processor pulled service on May 23, 2025 over NSFW exposure, and the site jumped to crypto rails overnight. Stability AI updated its Acceptable Use Policy on July 31, 2025 to bar explicit generation on its current models. The new policy doesn't retroactively cover SD 1.5 or SDXL though, which remain the backbone of the NSFW community. The EU AI Act and a growing list of U.S. state laws now require disclosure when AI-generated content depicts realistic humans. So anyone using uncensored AI commercially today is operating inside a legal frame that did not exist when Unstable Diffusion launched.

This stuff doesn't kill the technology. It just means the people building, hosting, and using it now carry the consent, dataset hygiene, and disclosure questions that the early phase mostly waved off.

Alternatives to Unstable Diffusion in 2026: FLUX, Pony, Kling

Unstable Diffusion is no longer the center of gravity. The model and platform map has reshuffled dramatically since the Kickstarter ban of 2022. The people doing serious uncensored AI work in 2026 look elsewhere first. The strongest alternatives split into three buckets.

Bucket one: open-weight uncensored checkpoints you run on your own machine. Pony Diffusion V6 XL hit CivitAI in January 2024 and quickly became the default NSFW SDXL fine-tune. It was built and distributed entirely outside the Unstable Diffusion pipeline. Pony plus its newer Illustrious sibling now dominate the stylized adult genre. Anime-trained checkpoints and various SDXL fine-tunes fill the same niche with different aesthetics. To run any of them comfortably you want a local GPU with at least 12 GB of VRAM.
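
A rough way to sanity-check the 12 GB guideline is to estimate how much memory the weights alone occupy. The sketch below assumes a parameter count of about 3.5 billion for the SDXL base pipeline, which is a publicly quoted approximation and should be treated as an assumption, and it ignores activations, the VAE decode pass, and any LoRAs, all of which add overhead on top.

```python
def weights_gib(param_count, bytes_per_param):
    """Memory needed to hold the model weights alone, in GiB."""
    return param_count * bytes_per_param / 2**30


# Assumption: ~3.5 billion parameters for the SDXL base pipeline (approximate).
SDXL_PARAMS = 3.5e9

fp16_gib = weights_gib(SDXL_PARAMS, 2)   # half precision, 2 bytes per parameter
fp32_gib = weights_gib(SDXL_PARAMS, 4)   # full precision, 4 bytes per parameter

print(f"SDXL-class weights in fp16: ~{fp16_gib:.1f} GiB")
print(f"SDXL-class weights in fp32: ~{fp32_gib:.1f} GiB")
```

Under those assumptions the half-precision weights take around 6.5 GiB, which leaves working room on a 12 GB card, while full precision would already crowd it at about 13 GiB.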

Bucket two: the next generation of base models. FLUX.1 from Black Forest Labs landed in August 2024 and reset the bar for prompt adherence and photorealism. Its open-weight FLUX.1-dev variant pulled in NSFW LoRAs from the community within weeks. Stability AI announced Stable Diffusion 3 in February 2024, released its first open weights that June, and followed with the bigger Stable Diffusion 3.5 in October 2024. SD3's well-reported anatomy bugs slowed adoption inside the uncensored crowd. Stability AI itself has backed off. Emad Mostaque resigned March 23, 2024 amid a financial crunch. The July 2025 policy update now formally forbids explicit generation on its current models.

Bucket three: video. The image-only era of generative AI is partly behind us. Kling, Runway Gen-3 and Gen-4, OpenAI's Sora, and Google's Veo have all pushed the frontier into video, and several allow adult content with age verification on third-party hosts. Unstable Diffusion has never made a comparable jump into video. That is part of why its cultural weight has quietly shrunk since 2023.

The table below compares the main options.

| Tool | Type | Local or hosted | NSFW capable | Best for |
|---|---|---|---|---|
| Unstable Diffusion (Discord) | Community + checkpoints | Local | Yes | Free, DIY |
| Unstability.ai | Web platform | Hosted | Yes (gated) | Easy uncensored hosting |
| Pony Diffusion V6+ | Open checkpoint | Local | Yes | Stylized adult art |
| FLUX.1-dev | Base model + fine-tunes | Local or hosted | With fine-tune | Best 2024-era quality |
| SDXL + CivitAI checkpoints | Base + community | Local or hosted | With fine-tune | Variety of styles |
| Kling 2.0 | Video generator | Hosted | Limited, gated | Short uncensored clips |

The right choice depends on how much GPU you have, how much you care about hosting versus DIY, and whether you need still images or video. None of these is a one-to-one replacement for the original Unstable Diffusion experience. The space has fragmented, and the brand no longer dominates the way it did during the Kickstarter year.