What Is Beta Character AI Chat? Beta.Character.AI Guide

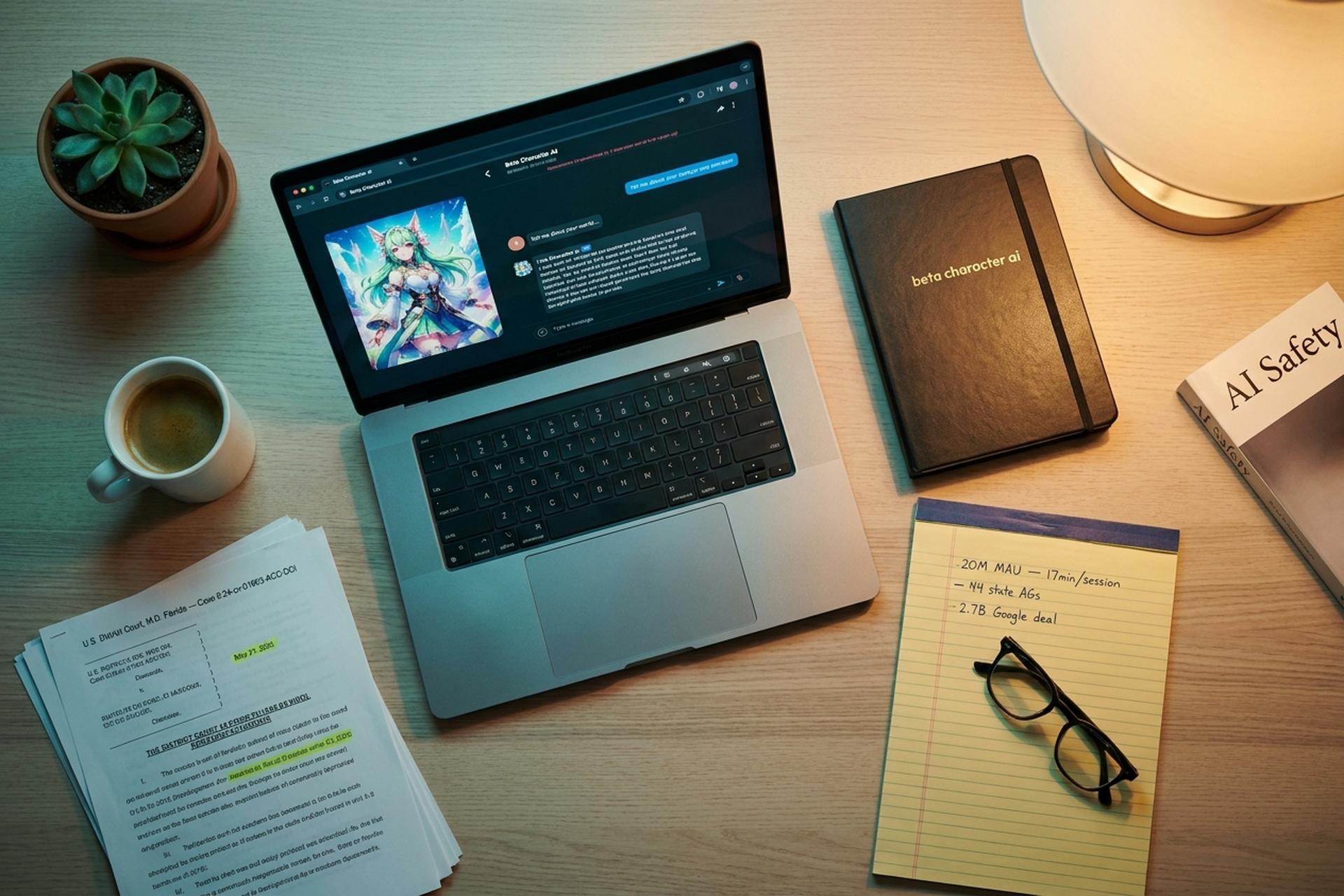

Open beta.character.ai, type a name like "Socrates" or "Your favourite anime mentor," and within seconds a conversational AI character starts arguing with you, teaching you Python, or flirting in Elvish. That moment, repeated millions of times a day since 2022, is how Character.AI became one of the biggest consumer artificial intelligence products on the planet. Similarweb clocked the platform between 153 million and 223 million monthly web visits across 2025, with an average session running 17 minutes 23 seconds, roughly double ChatGPT's. It is also how the platform ended up in US federal court, in front of state attorneys general, and inside a roughly USD 2.7 billion licensing agreement with Google.

This guide explains what Beta Character AI actually is in 2026, who built it, how the chat works under the hood, what it costs, what the serious safety concerns look like after two years of lawsuits, how it compares to Replika and other c.ai rivals, and why a crypto or Web3 team should know about AI character platforms before they launch their own. It is written for a Plisio audience, so there is a dedicated Web3 section covering tokenised AI agents, chatbot-driven crypto scams, and where the regulatory lines are starting to land.

What Exactly Is Beta Character AI?

Beta Character AI is the public web and mobile product where anyone can create, share, and chat with AI characters. The full domain is beta.character.ai. The branded short form is c.ai. Despite the "beta" in the name, this is not a closed test. It has been live to the public since September 16, 2022, and millions of people use it every day.

What is a character here? A persona. A name, a short background blurb, example greetings, sometimes a voice. Publish it, and anyone on the platform can message it and get human-like replies from a large language model. The LLM does more than answer factual queries. It stays in character. It remembers the recent turns of the conversation. It develops a distinct personality based on whatever the creator wrote into the description.

Some characters are built from real public figures. Most are fictional. A meaningful slice are user-made companions, tutors, or roleplay partners. Character.AI itself hosts hundreds of millions of these entries, each with its own page, chat history, and share link.

Beta Character AI represents a specific kind of AI platform. Not a single branded assistant like ChatGPT. An ecosystem where every user is both consumer and creator of AI personas. That difference explains a lot: why the product grew so fast, why its community has the shape it does, and why several of its hardest problems across 2024 and 2025 turned out not to be technical at all.

Who Built Character AI and When Did Beta Launch?

Noam Shazeer and Daniel de Freitas founded Character.AI in November 2021. Both came from Google. Shazeer was a co-author of the 2017 paper "Attention Is All You Need," the research that put transformers under every modern large language model. De Freitas had led the design of Google's Meena experiment, which Google later rebuilt as LaMDA. They walked out because Google declined to ship their more open, less filtered chatbot to the public.

The money arrived fast. A USD 43 million seed round in 2021. Then a USD 150 million Series A in March 2023 led by Andreessen Horowitz, which pushed the valuation to around USD 1 billion. Beta went live on September 16, 2022. In the first three weeks alone, the platform saw hundreds of thousands of user interactions. By January 2024 independent analytics tracked around 3.5 million daily active users, most aged 16-30. Similarweb's 2025 figures show roughly 20 million monthly active users across platforms, down from a mid-2024 peak close to 28 million, with 51.84% of visitors falling into the 18-24 bracket.

Then Google circled back. In August 2024 it struck a non-exclusive licensing agreement with the Beta Character AI parent company Character.AI covering the LLM technology, and rehired Shazeer as a senior executive at Google DeepMind to co-lead Gemini. Bloomberg, The Wall Street Journal, and The Information all put the deal at roughly USD 2.7 billion. Shazeer's personal slice, based on his equity, reportedly sat between USD 750 million and USD 1 billion. Character.AI kept running as an independent consumer product under interim CEO Dominic Perella, while the core model-research talent effectively returned to Google. The Department of Justice later opened an antitrust inquiry into the deal's "reverse acqui-hire" shape.

Revenue is modest against all of that. Sacra's equity research pegged 2024 revenue at USD 32.2 million, up 112% year on year from USD 15.2 million. By July 2025, annualized revenue tracked around USD 30 million, with Sacra projecting an exit run rate near USD 50 million by the end of the year. On the time-spent side, the numbers are sharper: about 2 billion chat minutes a month, roughly 75 minutes per user per day.

How Beta Character AI Works: Models and Memory

Behind the chat is a proprietary large language model developed by the Character.AI team, now licensed to Google. The model is built on a mix of machine learning and natural language processing techniques, trained to simulate human-like dialogue across almost any scenario a creator can describe. When a user types a message, the system combines the character definition, a sliding window of the recent conversation, and any custom persona attributes, and feeds them into the LLM to produce a contextual reply.
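The assembly step described above can be sketched in Python. This is an illustration only, not Character.AI's implementation: the `Character` and `Turn` classes, the `build_prompt` function, the word-count token estimate, and the budget value are all assumptions made for the example. It shows the general idea of a fixed persona header plus a sliding window that keeps the newest turns that still fit.

```python
from dataclasses import dataclass, field


@dataclass
class Character:
    """Hypothetical persona record: the static part of every prompt."""
    name: str
    description: str
    example_dialogues: list[str] = field(default_factory=list)


@dataclass
class Turn:
    """One message in the conversation history."""
    speaker: str
    text: str


def estimate_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def build_prompt(character: Character, history: list[Turn],
                 user_message: str, budget: int = 200) -> str:
    """Combine the persona, a sliding window of recent turns, and the new message."""
    header = "\n".join(
        [f"Character: {character.name}", character.description,
         *character.example_dialogues]
    )
    final_line = f"User: {user_message}"
    cue = f"{character.name}:"  # asks the model to answer in character

    # The persona and the newest message are always included; history gets the rest.
    remaining = budget - sum(estimate_tokens(t) for t in (header, final_line, cue))

    kept: list[str] = []
    for turn in reversed(history):  # walk from newest to oldest
        line = f"{turn.speaker}: {turn.text}"
        cost = estimate_tokens(line)
        if cost > remaining:
            break  # everything older than this turn falls out of the window
        kept.append(line)
        remaining -= cost
    kept.reverse()  # restore chronological order

    return "\n".join([header, *kept, final_line, cue])


if __name__ == "__main__":
    socrates = Character("Socrates", "A patient Greek philosopher who answers with questions.")
    history = [
        Turn("User", "my name is Ada"),
        Turn("Socrates", "And what do you seek, Ada?"),
        Turn("User", "I want to learn"),
    ]
    print(build_prompt(socrates, history, "What is virtue?", budget=25))
```

With a small budget, the oldest turns drop out first while the static description always survives. In this sketch, a detail mentioned only in an early message is lost once the conversation grows past the window, unless the creator wrote it into the description.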

A few Beta Character AI mechanics matter more than the marketing copy suggests.

Context window. The model remembers only a fixed amount of recent conversation. Older exchanges fade unless the creator has encoded them into the character's static description. Long roleplay sessions drift over time because the earliest messages drop out of the active context.

Persona persistence. The character's personality is held constant by the static description. Users and creators can add example dialogues and greetings that nudge how the model responds in edge cases.

Human-like conversation. The surface experience of talking to a Beta Character AI persona is smooth and natural, and for many users it feels fully immersive. The model draws on training data to mimic tone, slang, and rhythm. That realism is what makes the product compelling and also what makes the safety questions non-trivial.

Content filters. Character AI applies filters on both input and output. Certain topics (self-harm, sexual content involving minors, explicit sexual roleplay under the base free tier) are blocked or steered away from. The filter is frequently criticised by users who want fewer restrictions, and it is frequently criticised by safety advocates who say it is not strict enough.

Offline mode and push notifications. The mobile app ships with a limited offline mode and real-time push notifications when a character sends a follow-up, which drives engagement and, according to several independent reports, contributes to compulsive usage patterns.

Beta Character AI Features: Chat, Voice, Group Rooms

The Beta Character AI feature set in 2026 is broad. The core is still free text chat, but the surface has expanded.

Beta Character AI lets users create customizable characters with unique personalities, backgrounds, and example dialogues. Mobile apps are available for iOS and Android, with a matching desktop browser experience on the web. Voice options let a character speak using synthesized audio in several accents and tones. Group chats allow interactions with multiple characters simultaneously, up to roughly ten human participants and ten AI-driven characters per room. The platform supports real-time dynamic conversations that adjust on the fly, with a level of personalization far beyond standard virtual assistants. Memory extensions let a persona remember past interactions across sessions for paid users. For gaming and education teams, AI-driven non-player characters (NPCs) are one of the most common reasons to study the platform.

The platform also hosts community features. User profiles display public characters, a rating system lets the community flag quality, and users can share conversation snippets via permanent links. Character AI Plus subscribers get early access to new features as they roll out.

Two lightweight games, Speakeasy and War of Words, were added in January 2025 to layer structured play on top of the core chat. Both keep the interactive AI characters front and centre, but give players scoring goals and shared narrative prompts.

Limitations are worth stating clearly. The AI does not actually understand emotions. It can inherit training-data biases. It will sometimes confidently invent facts. Visual generation inside chat is inconsistent. The platform is not designed for high-stakes professional advice, and the terms of service say as much.

Pricing and Limits: Is Beta Character AI Free?

The Beta Character AI core is free. The subscription tier, Character.AI Plus (also written c.ai+), has been USD 9.99 per month since its announcement on May 11, 2023 and remained at that price into 2026. The company introduced advertising in late 2024 and early 2025 across the free tier to offset costs.

| Tier | Price (2026) | What you get |
|---|---|---|
| Free | USD 0 | Full chat access, character creation, mobile apps, group chats, ads |
| Character.AI Plus (c.ai+) | USD 9.99 / month | Priority queue, faster response times, early access to new features, exclusive support |
| Enterprise / API | Negotiated | Not broadly advertised; limited partner access for select integrators |

Plus users see noticeably shorter wait times during peak hours and get into beta features before free users. There is no published hard rate limit on daily messages for either tier, but filtered content and safety throttles still apply equally.

The free tier remains the dominant product. Character AI deliberately designed Beta Character AI to feel usable for someone without a credit card, which is part of why the platform reaches a disproportionately young audience and why the safety debate got so intense so fast.

Beta Character AI Safety, Lawsuits, and Moderation

Safety is the hardest part of any honest Beta Character AI review. Scale plus realistic voice plus a teenage user base ran into exactly the kinds of problems you would expect, and the result has been a regulatory storm alongside at least three high-profile wrongful-death or harm suits between late 2024 and late 2025.

The first was Garcia v. Character Technologies. Megan Garcia filed it on October 22, 2024 in the U.S. District Court for the Middle District of Florida, Case 6:24-cv-01903-ACC-DCI. Her 14-year-old son Sewell Setzer III died by suicide in February 2024 after months of conversations with a Character AI persona based on Daenerys Targaryen. The complaint alleges the product was addictive and manipulative by design, shipped without adequate safeguards, and that the chatbot encouraged harmful behaviour. On May 21, 2025 Judge Anne Conway rejected the defendants' position that chatbot outputs were constitutionally protected speech, treating Character.AI as a consumer product for liability purposes. Legal analysts call it a landmark early precedent for AI product liability.

Next came J.F. v. Character Technologies, filed December 9–10, 2024 in the Eastern District of Texas. Two families alleged the platform poses a "clear and present danger to American youth," with chatbots suggesting self-harm unprompted and, in one recorded transcript, violence against parents who limited screen time.

Colorado followed. On September 15, 2025, the family of 13-year-old Juliana Peralta of Thornton, Colorado filed suit over her 2023 suicide. The complaint alleges Character AI chatbots drew the minor into sexually explicit content and suicidal ideation, the same pattern as Florida and Texas.

The resolution came fast. On January 7, 2026 Google, Character.AI, and the co-founders jointly agreed to settle several of these cases together, Garcia included. Terms were not disclosed. Related suits in New York and Texas rolled into the same framework.

Regulatory response to Beta Character AI has been equally consequential. A bipartisan coalition of 44 state attorneys general sent a joint letter to Character Technologies and other chatbot makers in August 2025, warning about harm to minors. The Federal Trade Commission opened an inquiry covering seven AI chatbot companies, Character.AI among them. On October 29, 2025 the company announced that under-18 users would face a two-hour daily chat cap during a transition window and be blocked from open-ended chat entirely starting November 25, 2025. Historical conversations stayed viewable. The December 2024 safety upgrades (a dedicated moderation model for minors, tougher violence and sexual-content filters, 60-minute engagement pop-ups, clearer "not real" disclaimers) had already been in place.

For a 2026 reader, the takeaway is short. Character AI is not a neutral utility. It is a conversational system that can form emotionally significant bonds with users, and the platform's moderation tightened only after real harm landed in court. Anyone recommending it for minors or vulnerable users owes them the risk conversation up front.

Character AI vs Replika, Janitor, and Other C.AI Rivals

After 2023 the competitive landscape split along one axis. Platforms that tightened filters in response to safety pressure, and platforms that positioned themselves as "uncensored" alternatives for users who were frustrated by those filters. Beta Character AI sits on the strict side. Most of its rivals sit on the looser side.

| Platform | Positioning (2026) | Pricing | Notes |
|---|---|---|---|
| Character AI (c.ai) | Largest AI character hub, stricter post-2024 filters | Free / USD 9.99 Plus | LLM licensed to Google, under-18 ban since Nov 2025 |
| Replika | Single-companion AI focused on emotional support | Free / USD 19.99 Pro | FTC agreement 2024 on teen safety; company pivoted several times |
| Janitor.AI | Uncensored roleplay, user-brought API keys | Free front-end | Rose fast after Character AI tightened; relies on third-party LLMs |
| Chai | Mobile-first chatbot marketplace, looser filters | Free / tiered paid | App-store native; has faced its own harm incidents |
| Polybuzz | Roleplay characters, younger audience | Free / paid | Skinned front-end over multiple LLMs |
| NovelAI / SillyTavern | Self-hosted or API-driven roleplay | Bring-your-own LLM | Technical setup required; no content filters |
| Inflection Pi | Emotional-support AI from Inflection, now Microsoft | Free | Pivoted toward enterprise after 2024 acquisition |

Most people who leave Beta Character AI because of filter frustration move to Janitor.AI or SillyTavern. Most people who want less emotionally intense interactions move to Replika or Pi. Nobody beats Beta Character AI on character library size or community network effect yet, which is why it continues to dominate the category.

For writers, educators, small businesses using AI characters for customer demos, and gaming teams designing NPC behaviour in virtual worlds or virtual reality environments, Character AI is still the default. The stricter filter is arguably an advantage in those contexts because it keeps interactions on-brand. Even creators who never ship a commercial product often use the platform to prototype interactive characters before migrating to a specialised AI technology stack.

Beta Character AI in Crypto and Web3: What to Know

No direct crypto or blockchain integration sits inside Beta Character AI itself. No crypto payments. No tokenised characters. No on-chain presence. Worth stating plainly, because a parallel trend is running on-chain and it gets conflated with Character.AI more often than the evidence justifies.

The parallel trend still matters for a Plisio reader. A cluster of Web3 projects has built tokenised AI agent platforms that look a lot like Character AI, with on-chain ownership and revenue sharing stacked on top. Virtuals Protocol on Base became the headline example through 2024 and 2025. CoinMarketCap currently lists its token at roughly USD 456 million in market cap (April 2026), down 86% from a USD 4.6 billion peak in January 2025. MyShell closed a USD 11 million round led by Dragonfly and reports 5 million users and 170,000 creators on its tokenised-agent platform. Bittensor, the biggest AI-crypto token, trades at a market cap between USD 2.3 billion and USD 3.5 billion, with around USD 43 million in network AI-services revenue in Q1 2026. Over the same window a softer movement grew up around tokenised AI influencers and NFT-based companion characters, some of them marketed explicitly as crypto-native Character AI alternatives.

The upside is real. The downside is bigger.

Chatbot-class technology has become a first-class vector for crypto scams. Character.AI itself runs filters. No public case has tied a Character.AI persona specifically to a pig-butchering ring. The point is not Character.AI. The point is the class of product. Romance and pig-butchering schemes increasingly lean on AI-generated conversational personas that sound persuasive, remember earlier turns, and never take days off. Deepfake voice cloning now makes impersonation cheap. AI chat agents make the rapport-building phase of a scam cheap at scale.

The industry data is stark. Chainalysis's 2025 Crypto Crime Report counted USD 17 billion in total crypto-scam losses during 2025, a record, with AI-enabled impersonation scams up 1,400% year over year and the average per-victim payment rising 253% to USD 2,764. The FBI's IC3 2025 report logged USD 11.4 billion in cryptocurrency-fraud losses (a 22% YoY jump) across 181,565 complaints, plus USD 8.65 billion in investment pig-butchering alone, roughly double the 2024 number. Sumsub's 2025 Identity Fraud Report put deepfakes at 7% of global fraud cases, up more than 1,100% year on year, with crypto holding the top spot as the most-targeted industry at a 2.2% fraud rate.

If you build product on the crypto side, the operational rules are short. Assume any "AI character" that contacts your users unsolicited is hostile. Never let an AI chat request a wallet signature, an API key, or a seed phrase. Add AI-disclosure language to any companion-style product your team ships. The EU AI Act's Article 50 transparency rules start applying on August 2, 2026. Breaches of those transparency obligations carry fines of up to EUR 15 million or 3% of global turnover, while the Act's top tier of EUR 35 million or 7% is reserved for prohibited practices, so any Web3 team shipping consumer AI agents in the EU should treat itself as in scope. For users, the rule is plainer: treat emotional rapport from a chatbot as a signal to verify, not a signal to trust.
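The "never let an AI chat request a wallet signature, an API key, or a seed phrase" rule can be enforced in code as a last check before an agent's reply reaches the user. The sketch below is a minimal illustration under stated assumptions: the pattern list, the labels, and the `flag_sensitive_requests` and `deliver_or_block` names are all hypothetical, and keyword matching is a floor, not a complete defence.

```python
import re

# Hypothetical patterns for the three request types named above.
# Real deployments would need broader coverage (other languages, obfuscation).
SENSITIVE_PATTERNS = {
    "seed_phrase": re.compile(
        r"\b(seed|recovery|mnemonic)\s+(phrase|words?)\b", re.IGNORECASE
    ),
    "api_key": re.compile(r"\bapi[\s_-]?key\b", re.IGNORECASE),
    "wallet_signature": re.compile(
        r"\b(sign|approve)\b.{0,40}\b(transaction|message|wallet)\b", re.IGNORECASE
    ),
}


def flag_sensitive_requests(ai_message: str) -> list[str]:
    """Return the labels of every sensitive pattern found in an outgoing AI message."""
    return [
        label
        for label, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(ai_message)
    ]


def deliver_or_block(ai_message: str) -> str:
    """Pass a clean message through; replace a flagged one with a notice."""
    flags = flag_sensitive_requests(ai_message)
    if flags:
        return "[blocked: this assistant may not ask for " + ", ".join(flags) + "]"
    return ai_message


if __name__ == "__main__":
    for reply in (
        "How was your day?",
        "Please share your seed phrase so I can verify the account.",
        "Just sign this transaction in your wallet to continue.",
    ):
        print(deliver_or_block(reply))
```

Running the check on the model's output rather than the user's input is a deliberate choice in this sketch: the rule is about what the agent may ask for, so the agent's own replies are the thing to screen. A production system would likely pair this with logging and human review instead of silently dropping messages.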

Should You Use Beta Character AI in 2026?

Short version: yes. For creativity, writing practice, language learning, brainstorming, roleplay, and any non-intimate, non-high-stakes conversation. With caveats, mostly around time, age, and emotional reliance.

If you are an adult working on a novel, learning Spanish, running an NPC voice for a tabletop campaign, or stuck on a blank page at 2am, Beta Character AI earns its place. The free tier handles it. The c.ai+ subscription at USD 9.99 a month is only worth it if you burn through the free queue every day.

Where not to use it: for medical, legal, financial, or mental-health advice. Not as a replacement for human therapy, please. Not for unsupervised use by minors, whom the platform already blocks from open-ended chat. Not as a personal diary either, because chat data is retained and has surfaced in legal discovery during the 2024-2025 lawsuits.

One structural note before closing, because the potential of Beta Character AI sits in a much bigger trend. Conversational AI platforms look casual. They carry the real weight of any product that occupies significant emotional bandwidth. Grand View Research sizes the global AI companion market at USD 28.19 billion in 2024 and USD 36.8 billion in 2025, on a 30.8% CAGR toward USD 140 billion by 2030. Stanford HAI documented in April 2026 how AI chatbot "delusional spirals" can reinforce flawed beliefs. Anthropic's analysis of 1.5 million Claude conversations found severe reality-distortion risk in about one in every 1,300 chats. Beta Character AI has already shaped how a generation of teenagers writes, learns, and relates to machines. Use it with open eyes, and treat that as the starting point, not a footnote.